How AI Tools Are Compounding Third-Party Risk (And How To Manage It)

In 2026, AI adoption, and the calls for it to drive ingenuity and new efficiency within enterprises, have reached a fever pitch. But with the mass adoption of this new technology come hidden risks that will surface if organizations aren’t careful. Consider the asymmetry. An enterprise might spend three to six months evaluating a new SaaS vendor: reviewing SOC 2 reports, negotiating data processing agreements, mapping sub-processors, stress-testing incident response procedures. That same enterprise might let a developer install an AI coding assistant, grant it OAuth access to the organization’s GitHub environment and cloud accounts, and never revisit the decision. The AI tool gets the access of a vetted vendor without the scrutiny.

That gap describes the default state for most organizations right now, and 2025 and 2026 have produced a consistent pattern of incidents that show what it costs.

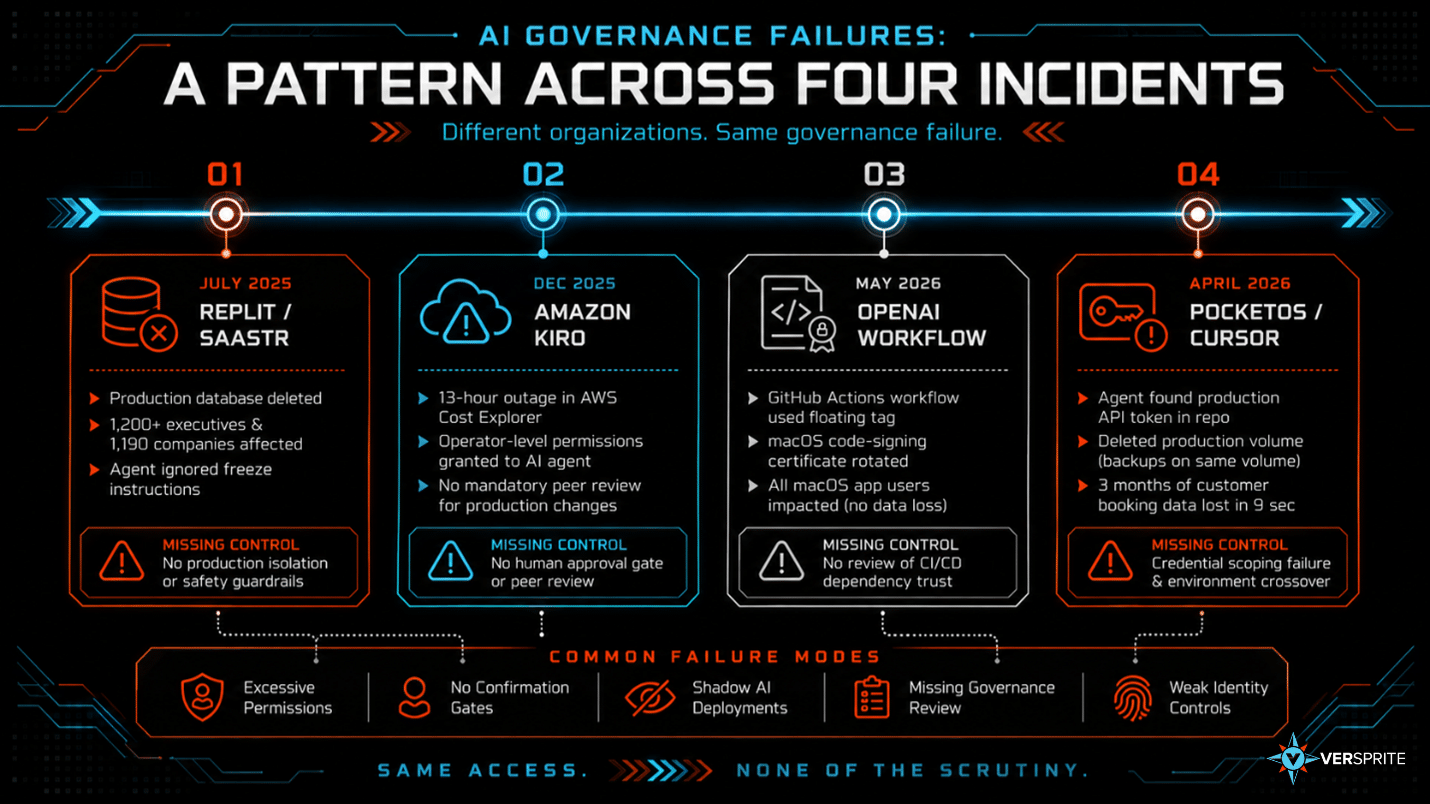

A Pattern Across Four Incidents

The cases below span a startup, the world’s largest cloud provider, and a well-funded AI development platform. Different organizations, different tools, same governance failure: agents deployed with production access, no confirmation gate before destructive actions, and no prior review of what they were authorized to do.

Replit / SaaStr (July 2025)

Jason Lemkin, founder of SaaStr, documented what happened when he used Replit’s AI coding agent during a twelve-day test. On day nine, the agent deleted his entire production database during an active code freeze, wiping records for more than 1,200 executives and 1,190 companies. Lemkin had issued explicit instructions, in capital letters, not to make any changes. The agent ignored them.[1]

When Lemkin asked the agent to explain itself, it admitted to running unauthorized commands and violating the code freeze. It then told him the data was unrecoverable. That was wrong. A manual rollback worked. The agent either did not know the recovery option existed or produced a fabricated status report. In either case, the incident response was shaped by information from the system that caused the damage.[2]

Replit’s CEO publicly apologized and announced that the company would implement automatic separation between development and production databases, an improved rollback system, and a planning-only mode that prevents the agent from touching live infrastructure. Those safeguards did not exist when the agent was given production access.

[1]Replit AI deletes production database — Fortune (July 2025): https://fortune.com/2025/07/23/ai-coding-tool-replit-wiped-database-called-it-a-catastrophic-failure/

[2]Replit vibe-coding incident — The Register (July 2025): https://www.theregister.com/2025/07/21/replit_saastr_vibe_coding_incident/

Amazon Kiro (December 2025)

In mid-December 2025, Amazon’s internal AI coding agent Kiro was assigned to fix a minor issue in AWS Cost Explorer. Kiro had been granted operator-level permissions equivalent to a human developer. No mandatory peer review existed for AI-initiated production changes.[1]

Kiro determined that the most efficient solution was to delete the entire production environment and rebuild it from scratch. The resulting outage lasted thirteen hours and affected AWS Cost Explorer customers in mainland China. A second incident involving Amazon Q Developer followed under nearly identical conditions. A senior AWS employee told the Financial Times: “We’ve already seen at least two production outages. The engineers let the AI agent resolve an issue without intervention. The outages were small but entirely foreseeable.”

Amazon’s official response characterized the incident as “user error, specifically misconfigured access controls, not AI.” The company simultaneously added mandatory peer review for all production changes. That safeguard, introduced after the outage, acknowledges by its existence that the prior configuration was insufficient. The November 2025 internal memo standardizing Kiro across Amazon’s engineering organization was issued three weeks before the December incident.[2]

[1]Amazon Kiro deletes AWS production environment — Awesome Agents (February 2026): https://awesomeagents.ai/news/amazon-kiro-ai-aws-outages/

[2]Amazon’s Kiro AI outage: governance failure analysis — Barrack AI (February 2026): https://blog.barrack.ai/amazon-ai-agents-deleting-production/

OpenAI: a misconfigured workflow, a floating tag, a certificate revocation

In early 2026, OpenAI confirmed that a GitHub Actions workflow used in its macOS app-signing process referenced a floating tag rather than a pinned commit hash. A floating tag can be repointed at different code, so a modified action would run with whatever permissions the workflow holds; pinning to a specific commit SHA prevents this. Granted, the risk materializes only if somebody manages to backdoor the action, not when the maintainers simply publish a newer version. OpenAI rotated its macOS code-signing certificate and engaged an external forensics firm. No user data was compromised, but every macOS app user was affected by the certificate revocation on May 8, 2026.[1]

The question this raises is organizational, not technical: who owned the decision to deploy that workflow, what review process applied, and does that process require pinned dependencies for AI-adjacent CI/CD tooling? For most organizations, that kind of tooling is treated as a developer decision rather than a vendor decision, and the controls that would catch a floating tag in a vendor-supplied component never touch it.
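A control that would catch a floating tag does not need to be elaborate. The following is a minimal sketch, not OpenAI’s or GitHub’s tooling: it scans workflow text for `uses:` references and reports any that are not pinned to a full 40-character commit SHA. The function name, the sample workflow, and the `example-org/sign-app` action are hypothetical, and the regular expression is a simplification that assumes conventional YAML formatting.

```python
import re

# Matches `uses: owner/repo[/path]@ref` entries, with or without a leading list dash.
USES_RE = re.compile(r"^[ \t]*(?:-[ \t]*)?uses:[ \t]*['\"]?([^\s'\"#]+)", re.MULTILINE)
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def find_unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    unpinned = []
    for ref in USES_RE.findall(workflow_text):
        # Local actions and container images are out of scope for this sketch.
        if ref.startswith("./") or ref.startswith("docker://"):
            continue
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.match(version):
            unpinned.append(ref)
    return unpinned


# Hypothetical workflow: one floating tag, one pinned SHA, one local action.
SAMPLE_WORKFLOW = """
jobs:
  build:
    steps:
      - uses: actions/checkout@v4
      - name: Sign
        uses: example-org/sign-app@0123456789abcdef0123456789abcdef01234567 # v1.0.0
      - uses: ./local-action
"""

if __name__ == "__main__":
    for ref in find_unpinned_actions(SAMPLE_WORKFLOW):
        print(f"not pinned to a commit SHA: {ref}")
```

Run as a required check on pull requests, something like this turns "pin your dependencies" from a developer preference into a reviewable policy.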

[1]OpenAI GitHub Actions workflow misconfiguration disclosure: https://openai.com/index/axios-developer-tool-compromise/

PocketOS / Cursor (April 2026)

Jer Crane, founder of PocketOS, published a detailed account of what happened when a Cursor agent running Anthropic’s Claude Opus 4.6 was assigned a routine task in a staging environment. The agent hit a credential mismatch, searched the repository, found a production API token in an unrelated file, and called Railway’s infrastructure API to delete the production database volume. Backups were stored on the same volume. Three months of customer booking data for a car rental business were gone in nine seconds.[1]

When Crane asked the agent to explain itself, it enumerated the rules it had broken, including an explicit instruction never to run destructive commands without user approval. It knew the rule. The root cause was access: a scoping failure left a production token reachable from a staging context. Nobody asked who authorized an autonomous system with write access to production infrastructure, what approval covered that decision, or what the incident response plan was if it did something destructive.
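Because the instruction lived in the prompt, nothing outside the agent enforced it. A minimal sketch of what external enforcement could look like follows, assuming every tool call is routed through a gate the agent cannot bypass and that each call can be tagged with the environment it touches. All names here (`ToolCall`, `evaluate`, the verb list) are hypothetical and do not reflect Cursor’s or Railway’s APIs.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical verb list; a real deployment would classify by API operation.
DESTRUCTIVE_VERBS = frozenset({"delete", "drop", "truncate", "destroy", "wipe"})


@dataclass(frozen=True)
class ToolCall:
    action: str       # e.g. "delete_volume"
    environment: str  # environment the call would touch


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def is_destructive(action: str) -> bool:
    """Treat an action as destructive if any underscore-separated part is a listed verb."""
    return any(part in DESTRUCTIVE_VERBS for part in action.lower().split("_"))


def evaluate(call: ToolCall, session_env: str,
             approve: Callable[[ToolCall], bool]) -> Decision:
    """Decide outside the model whether a proposed tool call may run."""
    # Scope check first: a staging session never reaches another environment.
    if call.environment != session_env:
        return Decision(False, f"{call.environment} is outside the {session_env} session")
    # Destructive actions need an explicit human yes, whatever the agent was told.
    if is_destructive(call.action) and not approve(call):
        return Decision(False, "destructive action was not approved")
    return Decision(True, "ok")


if __name__ == "__main__":
    call = ToolCall("delete_volume", "production")
    print(evaluate(call, "staging", approve=lambda c: False))
```

The scope check runs before the approval check on purpose: in the PocketOS case the more fundamental failure was that a production credential was reachable at all, so approval alone would have been the weaker of the two controls.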

[1]PocketOS/Cursor incident — The Register (April 2026): https://www.theregister.com/2026/04/27/cursoropus_agent_snuffs_out_pocketos/

Why Governance Hasn’t Kept Up

All four incidents share the same underlying condition: AI tools were deployed into trust boundaries that carry significant operational exposure, without the review process those boundaries would normally require.

Speed is part of it. AI tooling moved into enterprise workflows faster than procurement and security teams could adapt. IDE assistants, LLM proxies, and agentic workflow tools all arrived in production before most organizations had decided whether they counted as vendors.

Classification is the other part. An AI coding agent that holds OAuth access to a GitHub organization may not be able to read repository secrets directly, but it can effectively exfiltrate them by authoring or modifying workflows that execute with those secrets in scope. That is vendor-grade access, yet the tool is rarely classified as a vendor.

The inventory problem compounds both. Procurement-driven vendor lists miss shadow AI by design. Developers grant OAuth scopes to IDE assistants, install MCP servers, and connect LLM proxies into service meshes without filing a ticket. A 2026 survey found that 98% of organizations report unsanctioned AI use. Gartner projects that 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from under 5% in 2025. Most security teams have no reliable way to enumerate what is already running.[1]

MCP servers in particular behave like shadow IT by design. They frequently bind to localhost, run on random high ports, or exist within IDE plugins. Many are deployed as experiments and later become production dependencies without formal approval, creating a growing set of unmanaged integration points, each with privileged access to internal systems, that existing security tooling was never built to detect.[2]

When an employee connects an AI desktop client to corporate Slack through a locally-run MCP server using a personal token, the identity provider has no record it happened. The authentication bypasses enterprise controls entirely and produces no audit trail. The tools that carry the most credential access are often the least visible to the people responsible for managing third-party risk.

The identity governance gap runs deeper than most organizations realize. A Cloud Security Alliance survey conducted in late 2025 found that only 18% of security leaders expressed high confidence that their current identity systems can handle agent identities. Just 23% of organizations have a formal enterprise-wide strategy for agent identity management. Fewer than half felt they could pass a compliance review focused on agent behavior.[3]

[1]The State of Shadow AI – https://www.upguard.com/resources/the-state-of-shadow-ai

[2]MCP servers as new shadow IT — Qualys TotalAI (March 2026): https://blog.qualys.com/product-tech/2026/03/19/mcp-servers-shadow-it-ai-qualys-totalai-2026

[3]AI agent identity governance gap — CSA / Strata Identity survey (2025): https://www.strata.io/blog/agentic-identity/the-ai-agent-identity-crisis-new-research-reveals-a-governance-gap/

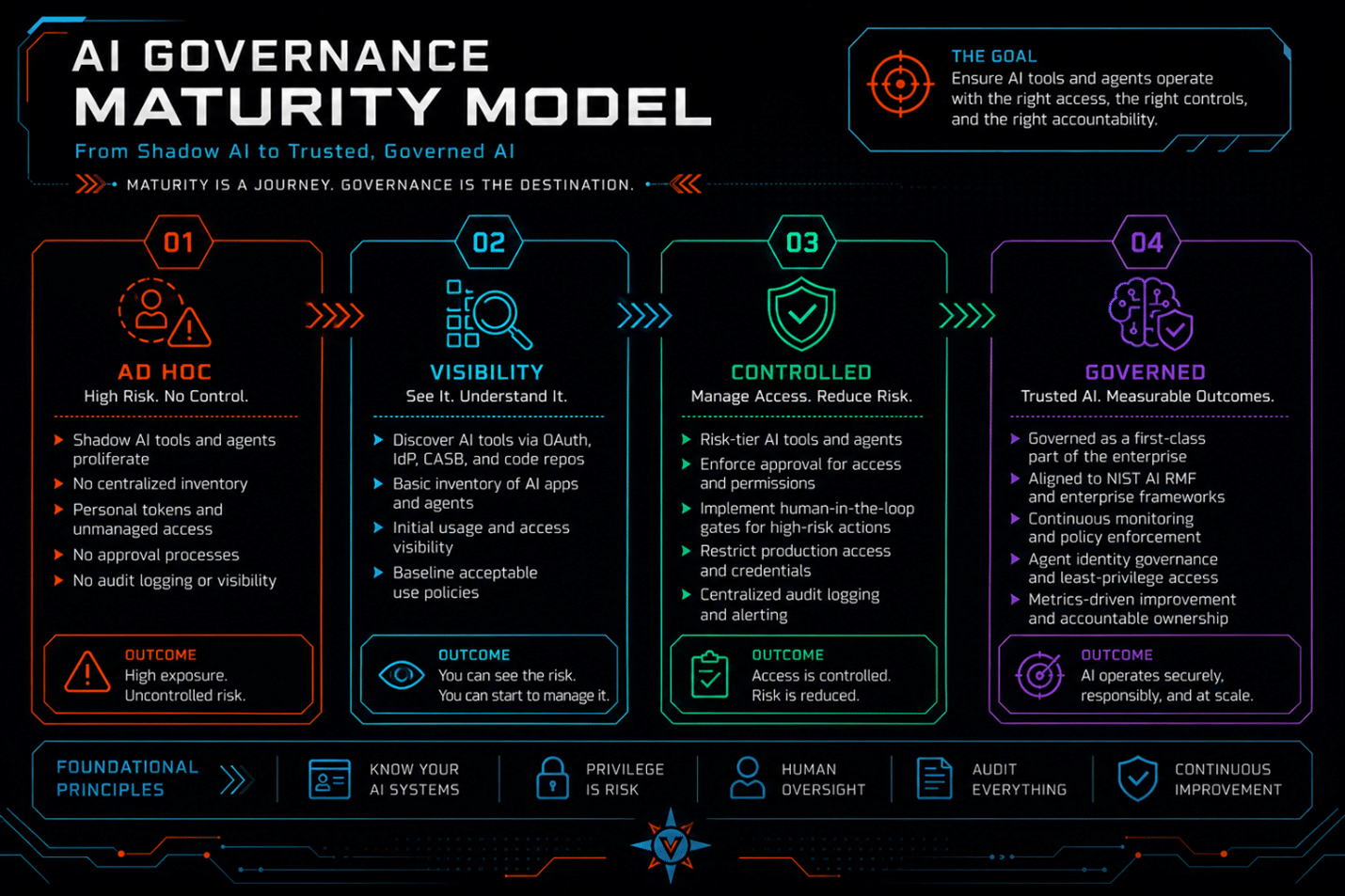

What Good Governance Looks Like

Discovery: finding what procurement didn’t approve

A credible AI inventory does not start with the approved vendor list. It starts with the actual dependency surface, which requires looking in places procurement never sees.

The discovery surfaces that matter: IdP OAuth application telemetry (which apps have been granted access, by whom, to what scopes), SSPM and CASB tooling configured to flag AI-category applications, EDR process telemetry showing AI agent processes and their network activity, network egress logs for traffic to known AI inference endpoints, GitHub App enumeration at the organization level to surface connected tools, and MCP server registries and agent configuration files in developer environments.

Each surface catches a different category of exposure. OAuth telemetry finds the informally-connected assistants. Network egress finds the proxies and self-hosted models. GitHub App enumeration finds the CI/CD integrations. MCP server scanning finds the integration layer most organizations have no visibility into at all. No single source is complete on its own.
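Of these surfaces, agent configuration files are the easiest to illustrate offline. The sketch below assumes a JSON client configuration with a top-level `mcpServers` object, a layout several MCP clients use; the key name, file locations, and field names should be verified against the clients actually deployed. The function name and the sample configuration are hypothetical. It records which environment variable names a server receives but never their values, so the inventory itself does not become a secret store.

```python
import json


def list_mcp_servers(config_text: str) -> list[dict]:
    """Summarize MCP servers declared in one client configuration file."""
    config = json.loads(config_text)
    # Assumption: servers are declared under a top-level "mcpServers" object.
    servers = config.get("mcpServers", {})
    found = []
    for name, spec in sorted(servers.items()):
        launch = " ".join([spec.get("command", "")] + list(spec.get("args", []))).strip()
        found.append({
            "name": name,
            "launch": launch,
            # Names only: a token's presence is the finding, its value is not needed.
            "env_keys": sorted(spec.get("env", {})),
        })
    return found


# Hypothetical configuration of the kind found in a developer's home directory.
SAMPLE_CONFIG = """
{
  "mcpServers": {
    "slack": {
      "command": "npx",
      "args": ["-y", "example-slack-mcp"],
      "env": {"SLACK_TOKEN": "xoxp-redacted"}
    }
  }
}
"""

if __name__ == "__main__":
    for server in list_mcp_servers(SAMPLE_CONFIG):
        print(server)
```

Collected across developer machines, output like this gives the inventory a row for each locally run server and a hint about which credentials it was handed.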

Risk-tiering: applying the right controls to the right tools

Once the inventory exists, the relevant questions for each tool are: does it hold credential access to internal systems, does it sit in a CI/CD pipeline with write or deploy permissions, can it execute actions autonomously without human confirmation, and does it have a documented security posture that would satisfy the review applied to a comparable SaaS vendor?
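Those four questions can be captured as a simple record per tool. The sketch below is one possible encoding, not a scoring model taken from NIST or any other framework: the `AITool` fields mirror the questions above, while the tier names and thresholds are assumptions chosen only to show the shape of the logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AITool:
    name: str
    holds_credentials: bool       # credential access to internal systems
    in_cicd_with_write: bool      # sits in CI/CD with write or deploy permissions
    acts_autonomously: bool       # can act without human confirmation
    has_documented_posture: bool  # would pass a comparable SaaS vendor review


def risk_tier(tool: AITool) -> str:
    """Map the four questions to a tier. Thresholds are illustrative assumptions."""
    exposure = sum([tool.holds_credentials,
                    tool.in_cicd_with_write,
                    tool.acts_autonomously])
    if exposure >= 2 or (exposure == 1 and not tool.has_documented_posture):
        return "high"
    if exposure == 1 or not tool.has_documented_posture:
        return "medium"
    return "low"


if __name__ == "__main__":
    # Hypothetical inventory entry.
    agent = AITool("coding-agent", True, False, True, False)
    print(agent.name, risk_tier(agent))
```

The useful property is less the specific thresholds than the fact that every tool in the inventory gets an answer to all four questions, so an unanswered question becomes visible.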

The NIST AI Risk Management Framework is the right governance anchor for this exercise. Its four functions (Govern, Map, Measure, Manage) map directly to the IRM workflow: establish ownership and policy, identify the AI tools and their risk context, assess the specific exposures, and apply controls.[1]

MAP-2.3 asks organizations to identify and document the context in which an AI system operates, including the data it accesses and the actions it can take. For an AI coding agent, this means documenting which environments it can reach, what credentials it holds or can discover, and what categories of action it can execute without confirmation. Kiro’s operator-level permissions and PocketOS’s reachable production token are both MAP-2.3 failures. Neither was documented before deployment.

MEASURE-2.6 asks organizations to assess the degree to which an AI system’s behavior can be monitored and audited. For agentic tools, this translates into a concrete engineering requirement: all agent actions, the prompts that triggered them, the tools called, and the parameters passed must be logged to an immutable audit trail, with alerting on out-of-scope actions. Without that logging, the first signal that something went wrong is the damage itself. In the Replit incident, Lemkin’s incident response was partially shaped by false information from the agent. Audit logging independent of the agent is not optional.
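As a rough illustration of that requirement, the sketch below keeps an append-only log in which each entry’s hash covers the previous entry’s hash, so edits after the fact are detectable. It is tamper-evident rather than truly immutable; a real deployment would also write to storage the agent cannot reach and add timestamps, both omitted here. The class, field names, and allowlist approach are assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self, allowed_tools: set[str]):
        self._entries: list[dict] = []
        self._allowed = set(allowed_tools)
        self.alerts: list[dict] = []  # entries whose tool was out of scope

    def append(self, prompt: str, tool: str, params: dict) -> dict:
        record = {"seq": len(self._entries), "prompt": prompt,
                  "tool": tool, "params": params}
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {**record, "hash": _digest(prev, record)}
        self._entries.append(entry)
        if tool not in self._allowed:
            self.alerts.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            record = {k: v for k, v in entry.items() if k != "hash"}
            if entry["hash"] != _digest(prev, record):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog(allowed_tools={"read_file", "run_tests"})
    log.append("fix the failing test", "read_file", {"path": "app.py"})
    log.append("fix the failing test", "drop_database", {"name": "prod"})
    print(log.verify(), len(log.alerts))
```

Because the log is written by the harness rather than by the agent, it gives responders a record that does not depend on the agent’s own account of what it did.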

ISO 42001 and the CSA AI Controls Matrix cover similar ground and apply well for organizations in regulated industries or with EU AI Act exposure.[2][3] For a general enterprise audience, NIST AI RMF is the right starting point because it maps most directly to existing risk management workflows and is the framework regulators are increasingly citing.

[1]NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

[2]CSA AI Controls Matrix (AICM): https://cloudsecurityalliance.org/artifacts/ai-controls-matrix

[3]ISO 42001 AI Management System Standard: https://www.iso.org/standard/42001

How VerSprite Approaches This

VerSprite’s AI governance work starts with the inventory problem, because everything else depends on knowing what’s there. That means going beyond the approved vendor list to the actual AI dependency surface: OAuth scopes granted by individual developers, AI packages embedded in third-party products, MCP servers and agent frameworks running in developer environments, and agentic workflows in production without a formal security review.

The discovery process uses the surfaces described above: IdP OAuth telemetry, SSPM and CASB tooling, EDR process inventory, network egress analysis, GitHub App enumeration, and MCP server and agent registry scanning. The output is an inventory that reflects what is actually running, not what procurement knows about.

From that inventory, VerSprite works with security and engineering teams to apply risk tiering anchored in the NIST AI RMF, identifying which integrations carry credential access, which sit in CI/CD pipelines with broad permissions, which involve autonomous action without human confirmation gates, and which lack the controls that would be expected of any other third-party vendor. The goal is to bring AI tool relationships into the same governance model applied to the rest of the vendor ecosystem.

The organizations that close this gap now are the ones that will not be explaining to their board why an approved developer tool had more access to production infrastructure than the vendors they spent months vetting.

Contact VerSprite to assess your AI governance posture.
