AI-Driven SecOps with Gemini: What Changes in SOC Operations

Security teams are not losing because they lack tools. They are losing because they lack time.

Attackers move quickly. Security Operations Center (SOC) teams often have to investigate alerts across fragmented systems, with incomplete context and growing volumes of telemetry. The gap between detection and decision is where operational risk increases.

AI-driven SecOps is often described as a path toward automation. In practice, its value is more specific. AI changes how investigations begin, how analysts move through evidence, and how decisions are reviewed before action is taken.

Based on VerSprite’s experience working with Gemini in Google SecOps, the shift is not about replacing analysts. It is about helping analysts start with context, reduce repetitive triage work, and validate decisions faster.

What Is AI-Driven SecOps?

AI-driven SecOps is the use of artificial intelligence to support security operations workflows such as alert triage, investigation, query generation, case summarization, and decision support.

In a SOC environment, AI can help analysts by:

- Summarizing security cases

- Building event timelines

- Suggesting investigation paths

- Identifying likely false positives

- Supporting faster escalation decisions

- Highlighting areas that need human review

The purpose is not to remove the analyst from the process. The purpose is to reduce the time analysts spend gathering basic context so they can spend more time validating findings and making decisions.

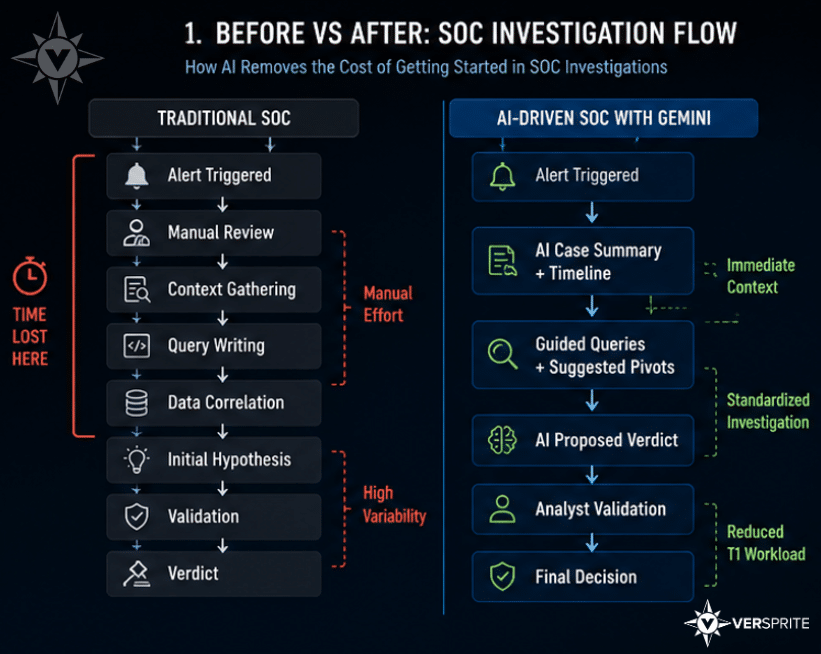

The Real Problem: Time Lost at the Start of an Investigation

In many SOC environments, the largest source of inefficiency is not detection. It is what happens immediately after an alert is created.

Even with Security Information and Event Management (SIEM) and Security Orchestration, Automation, and Response (SOAR) platforms in place, analysts still spend time answering basic questions:

- What triggered the alert?

- Which user, asset, or system was involved?

- What happened before and after the event?

- Which logs should be reviewed next?

- What query should be written to validate the hypothesis?

This early investigation friction adds up quickly.

Tier 1 analysts often spend much of their time reviewing repetitive alerts, building timelines, and separating false positives from cases that require escalation. When every alert starts from raw data, investigations slow down before they meaningfully begin.

The result is more than alert fatigue. It is delayed decision-making at scale.

What Changes with Gemini in Google SecOps?

The most immediate impact of Gemini in Google SecOps is not that it automatically finds more threats. The more practical change is that it lowers the cost of starting an investigation.

Instead of beginning with disconnected alerts and raw telemetry, analysts can begin with summarized context, suggested next steps, and an initial assessment that can be reviewed.

1. Investigations Start with Context Instead of Raw Data

In a traditional SOC workflow, analysts often begin by reviewing an alert and manually reconstructing the surrounding activity. This can include checking event logs, identifying affected assets, reviewing user behavior, and building a sequence of events.
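To make that manual reconstruction concrete, the sketch below filters raw events to a time window, orders them into a timeline, and lists the users and hosts involved. It is a minimal illustration under stated assumptions: the `Event` fields, the sample events, and the helpers `build_timeline` and `involved_entities` are hypothetical, and the code does not represent how Gemini or Google SecOps builds timelines internally.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    """A simplified, hypothetical log event; real SIEM records carry far more fields."""
    timestamp: datetime
    source: str   # e.g. "auth", "endpoint", "network"
    user: str
    host: str
    action: str


def build_timeline(events, window_start, window_end):
    """Keep events inside [window_start, window_end] and order them oldest first."""
    in_window = [e for e in events if window_start <= e.timestamp <= window_end]
    return sorted(in_window, key=lambda e: e.timestamp)


def involved_entities(timeline):
    """Collect the distinct users and hosts that appear in the timeline."""
    return {
        "users": sorted({e.user for e in timeline}),
        "hosts": sorted({e.host for e in timeline}),
    }


# Illustrative sample data only: events arrive out of order from different sources.
events = [
    Event(datetime(2024, 5, 1, 9, 7), "endpoint", "alice", "wks-12", "powershell_exec"),
    Event(datetime(2024, 5, 1, 9, 2), "auth", "alice", "wks-12", "login_success"),
    Event(datetime(2024, 5, 1, 11, 0), "network", "bob", "srv-3", "dns_query"),
]
timeline = build_timeline(events, datetime(2024, 5, 1, 9, 0), datetime(2024, 5, 1, 10, 0))
for e in timeline:
    print(e.timestamp.isoformat(), e.source, e.action)
print(involved_entities(timeline))
```

Even this toy version shows where the time goes: choosing the window, merging sources, and deciding which entities matter are all judgment calls an analyst makes before the real investigation starts.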

With Gemini in Google SecOps, the analyst starts from a more complete view of the case.

That may include:

- A summary of what happened

- A structured timeline of events

- Relevant entities such as users, hosts, IP addresses, or applications

- Initial context around suspicious activity

- A proposed direction for investigation

This changes the analyst’s first question.

Instead of asking, “What is this alert?” the analyst can ask, “Does this summary accurately reflect what happened?”

That distinction matters. The analyst is still responsible for validating the case, but they are no longer starting from a blank page.

2. Querying Becomes Guided Instead of Fully Manual

Security investigations often depend on the analyst’s ability to write effective queries. This creates inconsistency across teams because query quality can vary by analyst experience, familiarity with the data, and knowledge of the environment.

AI-assisted query guidance helps reduce that variability.

Instead of writing every query from scratch, analysts can receive suggested queries that help them continue the investigation. These queries can guide pivots across relevant telemetry, such as authentication logs, endpoint activity, network traffic, or cloud events.

This helps SOC teams in two ways.

First, it reduces the amount of time spent constructing initial queries. Second, it creates more consistent investigation paths across analysts.

The goal is not to prevent analysts from writing their own queries. Experienced analysts still need the ability to test their own hypotheses. The value is in giving analysts a faster and more standardized starting point.
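A standardized starting point can be as simple as a shared mapping from entity types to the telemetry an analyst should pivot into first. The sketch below assumes a hypothetical `PIVOT_SOURCES` table and `pivot_plan` helper; the source names are placeholders, and the printed lines are pseudo-queries rather than the query syntax of Google SecOps or any other SIEM.

```python
# Hypothetical mapping from entity type to the telemetry worth pivoting into.
# Source names are placeholders, not real data source identifiers.
PIVOT_SOURCES = {
    "user": ["authentication", "cloud_audit"],
    "host": ["endpoint", "network"],
    "ip": ["network", "authentication"],
}


def pivot_plan(entities):
    """Turn (entity_type, value) pairs into an ordered, de-duplicated list of pivot steps."""
    steps = []
    seen = set()
    for entity_type, value in entities:
        for source in PIVOT_SOURCES.get(entity_type, []):
            step = (source, entity_type, value)
            if step not in seen:  # the same entity may be reported more than once
                seen.add(step)
                steps.append(step)
    return steps


plan = pivot_plan([("user", "alice"), ("host", "wks-12"), ("user", "alice")])
for source, entity_type, value in plan:
    # Pseudo-query text for illustration only.
    print(f"search {source} where {entity_type} == {value!r}")
```

Because every analyst draws on the same table, two people handling the same entities begin with the same pivots, while remaining free to add their own hypotheses afterward.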

3. AI-Assisted Verdicts Reduce Tier 1 Workload

Gemini can provide a proposed verdict for a case, helping analysts determine whether an alert is more likely to be benign, suspicious, or worth escalating.

In practice, the analyst still reviews the evidence and validates the assessment. The AI-assisted verdict acts as a decision-support layer, not a final authority.

This is especially useful for Tier 1 workflows where analysts handle high volumes of repetitive alerts. Clear false positives can be reviewed faster, repeated patterns become easier to identify, and escalation decisions can happen with better context.

Over time, this can reduce the operational load on Tier 1 analysts and allow more attention to be directed toward cases that require deeper investigation.
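One way to act on repeated patterns is to count which detections analysts keep closing as benign for the same entity, then review those pairs as tuning candidates. The sketch assumes a hypothetical case record with `rule`, `entity`, and `analyst_verdict` fields and an arbitrary `min_repeats` threshold. It deliberately counts analyst-confirmed verdicts rather than AI proposals, so the tuning signal rests on human review.

```python
from collections import Counter


def tuning_candidates(closed_cases, min_repeats=3):
    """Return ((rule, entity), count) pairs that analysts repeatedly closed as benign."""
    counts = Counter(
        (case["rule"], case["entity"])
        for case in closed_cases
        if case["analyst_verdict"] == "benign"  # only human-confirmed outcomes count
    )
    return [(key, n) for key, n in counts.most_common() if n >= min_repeats]


# Illustrative sample records; field names and values are hypothetical.
cases = [
    {"rule": "impossible_travel", "entity": "vpn-gw", "analyst_verdict": "benign"},
    {"rule": "impossible_travel", "entity": "vpn-gw", "analyst_verdict": "benign"},
    {"rule": "impossible_travel", "entity": "vpn-gw", "analyst_verdict": "benign"},
    {"rule": "new_admin_account", "entity": "dc-01", "analyst_verdict": "escalated"},
    {"rule": "port_scan", "entity": "scanner-01", "analyst_verdict": "benign"},
]
print(tuning_candidates(cases))
```

A candidate is only a prompt for review: a detection that keeps firing benignly on one entity may need an exception, or it may be masking something worth a closer look.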

What We Observed in Practice

One important observation is that AI-assisted verdicts can improve as the system is used and as more context becomes available.

Over time, correlation may become more accurate, recommendations may become more relevant, and repeated patterns may be easier to recognize. This can improve the efficiency of triage and tuning.

However, AI outputs are not perfect.

There are still edge cases where the model may struggle. Context can be misinterpreted. A case summary may appear reasonable but miss an important environmental detail. A proposed verdict may require additional validation before it can be trusted.

This reinforces a critical principle for AI-driven SecOps:

AI can accelerate decisions, but it should not independently validate them.

Why Human-in-the-Loop Review Still Matters

Security decisions carry operational consequences. A false positive can waste analyst time, but a false negative can allow risk to persist. For that reason, AI-generated analysis should remain subject to human review.

A human-in-the-loop process means analysts remain responsible for validating outputs before decisions are made.

In this model:

- AI summarizes the case

- AI suggests investigative paths

- AI may propose a verdict

- Analysts validate the reasoning

- Quality assurance remains manual

- Final decisions are not fully automated

This approach keeps AI in the role where it is most useful: accelerating investigation and organizing context.

It also keeps humans in the role where they are most necessary: judgment, validation, and accountability.
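The human-in-the-loop model above can be expressed as a simple rule in case-handling code: the AI verdict is stored only as a proposal, and a case counts as decided once a named analyst records a final verdict, which may differ from the proposal. This is a minimal sketch; the `Case` and `Verdict` types are hypothetical, the verdict labels follow the benign, suspicious, or escalate categories described earlier, and none of it is a Google SecOps or SOAR API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    BENIGN = "benign"
    SUSPICIOUS = "suspicious"
    ESCALATE = "escalate"


@dataclass
class Case:
    case_id: str
    ai_summary: str
    ai_verdict: Verdict                    # proposed by the model, never final on its own
    analyst: Optional[str] = None
    final_verdict: Optional[Verdict] = None

    def approve(self, analyst: str, verdict: Verdict) -> None:
        """Record the analyst's decision; it may confirm or override the AI proposal."""
        if not analyst:
            raise ValueError("a named analyst must approve the verdict")
        self.analyst = analyst
        self.final_verdict = verdict

    @property
    def is_closed(self) -> bool:
        """A case only counts as decided once a human has signed off."""
        return self.final_verdict is not None


incident = Case("case-001", "Login followed by PowerShell on wks-12", Verdict.BENIGN)
print(incident.is_closed)                          # the AI proposal alone does not close it
incident.approve("analyst-a", Verdict.ESCALATE)    # the analyst overrides the proposal
print(incident.is_closed, incident.final_verdict.value, incident.ai_verdict.value)
```

Keeping the proposal and the final decision in separate fields also leaves an audit trail: reviewers can later see how often analysts overrode the AI and on which kinds of cases.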

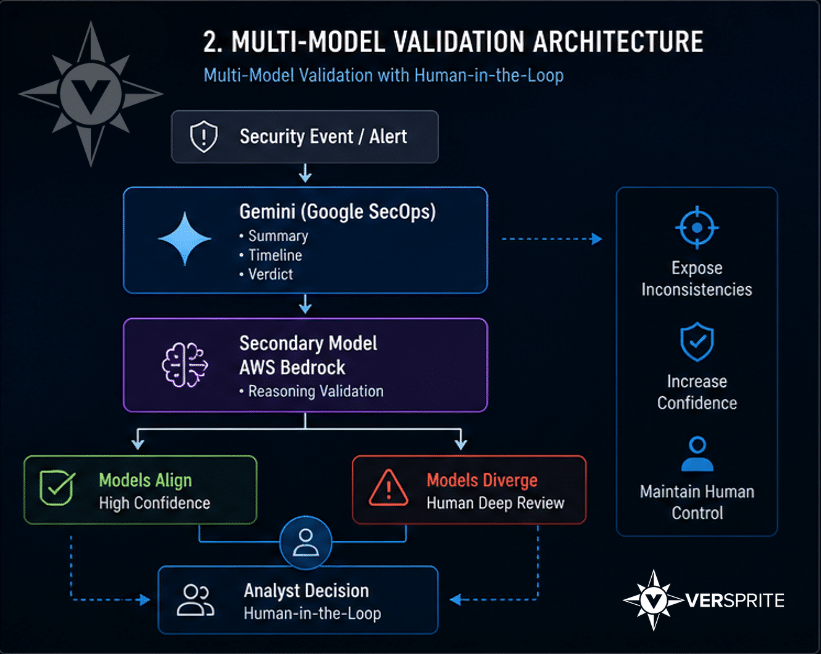

Multi-Model Validation: A Practical Safeguard

VerSprite does not rely on a single model to make security decisions.

While Gemini can support summaries, investigation paths, and proposed verdicts, VerSprite adds a separate validation layer by reviewing AI-generated analysis through another model using Amazon Bedrock, AWS's managed foundation model service.

This creates a multi-model validation workflow.

In this workflow:

- One model generates the narrative, reasoning, and proposed verdict

- A second model evaluates the analysis from another perspective

- Analysts review inconsistencies before making a decision

The objective is not simply to make both models agree. The objective is to expose differences in reasoning.

If both models align, confidence may increase. If they diverge, that divergence becomes a signal for deeper human review.

This helps reduce reliance on a single model, improves review of edge cases, and supports more controlled decision-making.
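The comparison step in this workflow can be sketched as follows, assuming both models' outputs have already been parsed into a common structure with a verdict and a set of key entities. The `ModelAssessment` type and `compare_assessments` function are hypothetical; the sketch does not call Gemini or Amazon Bedrock and is not VerSprite's implementation. Agreement only lowers the review priority; it never closes the case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelAssessment:
    """Hypothetical normalized output from one model; field names are illustrative."""
    model: str
    verdict: str              # e.g. "benign", "suspicious", "escalate"
    key_entities: frozenset


def compare_assessments(primary, reviewer):
    """Compare two assessments and choose the level of human review.

    Returns (review_level, disagreements). Divergence is surfaced, not resolved.
    """
    disagreements = []
    if primary.verdict != reviewer.verdict:
        disagreements.append(f"verdict: {primary.verdict} vs {reviewer.verdict}")
    only_primary = primary.key_entities - reviewer.key_entities
    only_reviewer = reviewer.key_entities - primary.key_entities
    if only_primary or only_reviewer:
        disagreements.append(
            f"entities: only {primary.model}={sorted(only_primary)}, "
            f"only {reviewer.model}={sorted(only_reviewer)}"
        )
    review = "deep_review" if disagreements else "standard_review"
    return review, disagreements


a = ModelAssessment("model_a", "benign", frozenset({"alice", "wks-12"}))
b = ModelAssessment("model_b", "suspicious", frozenset({"alice", "wks-12", "10.0.0.5"}))
review, notes = compare_assessments(a, b)
print(review)
for note in notes:
    print(" -", note)
```

Comparing entities as well as verdicts matters: two models can reach the same verdict while one of them has overlooked an asset, and that gap is exactly the kind of divergence the analyst should see.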

The Operational Impact of AI-Driven SecOps

When implemented carefully, AI-driven SecOps can change SOC operations in practical ways.

The impact includes:

- Faster case triage because analysts begin with context

- Shorter investigations because query paths are easier to follow

- Reduced Tier 1 workload through AI-assisted filtering

- Faster identification of repeated false positives

- Improved tuning opportunities based on repeated patterns

- More analyst time spent on validation and decision-making

The most important change is not that AI does the analyst’s job. It is that AI reduces the time spent collecting and organizing information.

That gives analysts more time to assess whether the information is accurate, complete, and actionable.

AI Does Not Create an Autonomous SOC

The idea of a fully autonomous SOC is often overstated.

Security operations still require judgment, business context, environmental knowledge, and accountability. AI can help organize evidence and accelerate workflows, but it cannot fully replace the reasoning required to make security decisions.

A more realistic model is layered:

- AI accelerates investigation

- Multiple models help validate reasoning

- Analysts review and approve decisions

- Quality assurance remains part of the process

This model improves speed without removing control.

Conclusion: AI Reshapes the SOC, But Analysts Remain Central

AI-driven SecOps is most valuable when it reduces uncertainty early in the investigation process.

Gemini in Google SecOps helps analysts begin with context, follow guided investigation paths, and review proposed verdicts. Multi-model validation adds another layer of scrutiny, while human-in-the-loop review ensures that final decisions remain accountable.

The value of AI in the SOC is not in replacing analysts. It is in helping analysts reach better-validated decisions faster.

In security operations, time defines risk. Reducing the time between alert and decision is where AI can make a measurable difference.

FAQ

What is AI-driven SecOps?

AI-driven SecOps uses artificial intelligence to support security operations tasks such as alert triage, case summarization, query generation, investigation guidance, and decision support.

How does Gemini help SOC analysts?

Gemini helps SOC analysts by summarizing cases, creating timelines, suggesting investigative queries, and providing proposed verdicts that analysts can review and validate.

Does AI replace SOC analysts?

No. AI can accelerate investigation workflows, but analysts are still needed to validate context, review reasoning, assess risk, and make final decisions.

What is human-in-the-loop security?

Human-in-the-loop security means AI can assist with analysis, but a human analyst remains responsible for reviewing outputs and approving final decisions.

Why use multiple AI models in SecOps?

Using multiple AI models can help identify inconsistencies in reasoning. If two models produce different interpretations of the same case, that difference can signal the need for deeper analyst review.

What is the main benefit of AI in SOC operations?

The main benefit is faster access to context. AI helps analysts spend less time gathering information and more time validating evidence and making decisions.
