DPRK IT Workers: The Operational Reality Most Organizations Are Missing

Democratic People’s Republic of Korea (DPRK, North Korea) IT worker activity is often reduced to hiring fraud, insider threat, or remote work abuse. That framing is too narrow.

The more accurate view is this: DPRK IT worker operations are designed to create legitimate access inside organizations while avoiding the signals security teams are trained to detect.

- The worker gets hired.

- The credentials are valid.

- The endpoint is clean.

- The work may appear real.

- The activity often looks normal.

That is what makes the model effective.

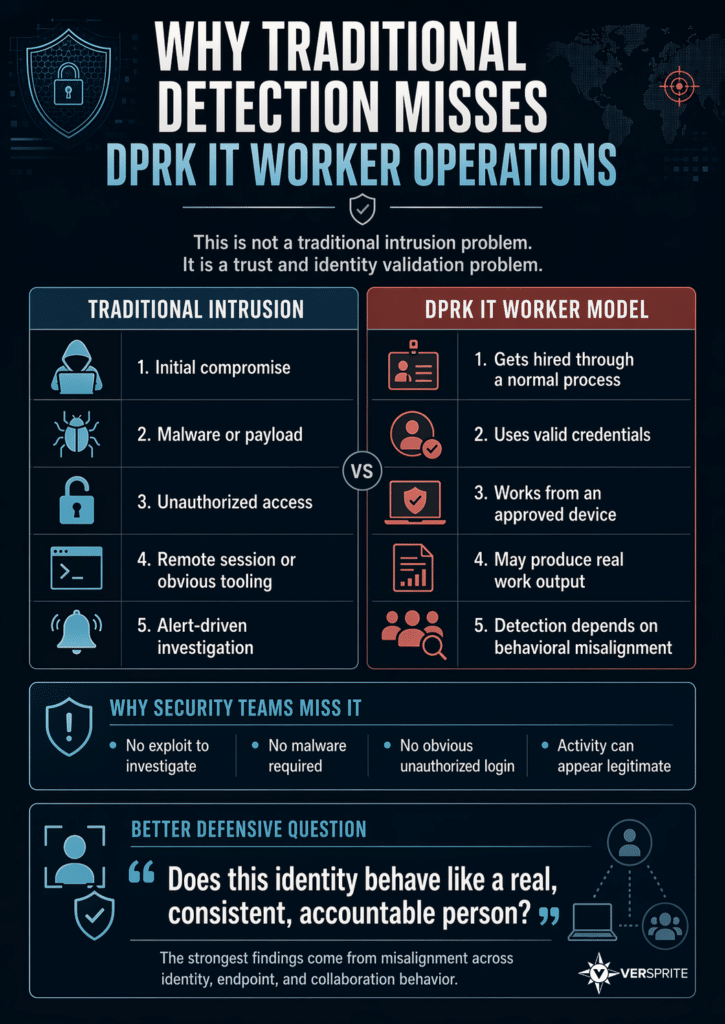

This is not a traditional intrusion problem. It is a trust problem. The organization believes it has hired a legitimate employee or contractor. Security tooling sees approved identity, approved access, and approved activity. The risk develops inside that assumption.

U.S. government agencies have warned that North Korean IT workers obtain remote employment while posing as non-DPRK nationals, often to generate revenue for the regime. The FBI has also warned that these workers use identity obfuscation, U.S.-based facilitators, and company access to sustain the scheme.

The defensive question is no longer only, “Is something malicious happening?”

The better question is, “Does this look like a real, consistent person operating this identity over time?”

Why DPRK IT Worker Operations Evade Traditional Detection

Most security programs are built around compromise.

They look for exploitation, malware, suspicious processes, remote access tools, lateral movement, command and control, privilege escalation, and data staging. Those signals matter, but they are not always present in DPRK IT worker operations.

In many cases, the activity begins with a successful hiring process.

The individual may pass interviews, provide documentation, complete onboarding, receive a corporate device, and access systems using approved credentials. From the environment’s perspective, there may be no initial compromise event.

- There is no obvious payload.

- There is no unauthorized login.

- There may be no malware.

- There may be no lateral movement.

- There may be no single event that looks clearly malicious.

The operation depends on legitimacy. Once that legitimacy is granted, the attacker benefits from the same assumptions applied to every other employee.

That is why many organizations struggle to detect the activity after onboarding. The identity appears valid, the access is expected, and the endpoint may not produce the kinds of alerts defenders normally rely on.

The Role of Hardware-Based Access

One of the more important patterns in this threat model is the use of hardware-based access, including keyboard, video, and mouse (KVM) over IP devices, commonly referred to as IPKVM.

IPKVM devices are legitimate tools. They are commonly used for remote administration, data center operations, and hands-on system control when software-based access is unavailable or undesirable.

The tool itself is not the issue.

The issue is how it changes the detection model.

An IPKVM can provide keyboard, mouse, and video control at the hardware layer. From the endpoint’s perspective, the activity may look like a local user interacting with the machine. There may be no remote desktop session, no installed remote access agent, and no obvious software process that indicates remote control.

That creates a blind spot.

If defenders are looking only for RDP, remote management tools, suspicious remote sessions, or endpoint agents associated with remote access, they may not see anything meaningful. The system appears clean because, technically, it may be clean.

The user is not necessarily bypassing the endpoint. They are using the endpoint through a path many endpoint controls are not built to observe.
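Because the interaction arrives through what looks like a local keyboard, mouse, and display, one place the path can sometimes surface is the peripheral inventory. The following is a minimal sketch, assuming the organization has some export of attached peripherals per endpoint and a baseline of what it issued. The `Peripheral` record, its fields, and the identifier values are hypothetical and do not come from any specific tool. A hardware device can present generic or copied identifiers, and a new docking station is a benign cause, so a hit here is a weak signal rather than a verdict.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Peripheral:
    """One attached device from a hypothetical inventory export."""
    kind: str        # "keyboard", "mouse", or "display"
    vendor_id: str   # placeholder identifier, not a real vendor code
    product_id: str  # placeholder identifier


def unexpected_peripherals(observed, issued):
    """Return observed peripherals that are not part of the issued baseline."""
    issued_set = set(issued)
    return [p for p in observed if p not in issued_set]


def input_path_changed(observed, issued):
    """True when keyboard, mouse, and display are all unexpected at once.

    A full swap of the input and display path is the pattern of interest;
    a single replaced mouse is not.
    """
    kinds = {p.kind for p in unexpected_peripherals(observed, issued)}
    return {"keyboard", "mouse", "display"} <= kinds


# Illustrative data only; every identifier below is made up.
issued = [
    Peripheral("keyboard", "0x1111", "0x0001"),
    Peripheral("mouse", "0x1111", "0x0002"),
    Peripheral("display", "AAA", "0x0100"),
]
observed = [
    Peripheral("keyboard", "0x2222", "0x0009"),
    Peripheral("mouse", "0x2222", "0x0009"),
    Peripheral("display", "BBB", "0x0200"),
]
print(input_path_changed(observed, issued))
```

The check deliberately requires all three device kinds to change together, which keeps ordinary peripheral replacements from generating noise.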

Why “Clean Endpoint” Does Not Mean “Valid User”

A clean endpoint can be misleading in this scenario.

Security teams often treat the absence of malware, suspicious tooling, and exploit activity as an indicator that the user is legitimate. For DPRK IT worker operations, that assumption can fail.

The endpoint may be clean because the operator does not need to compromise it. The organization already issued the device, approved the account, and granted access.

The risk is not that the machine was taken over in a traditional sense. The risk is that the identity operating the machine may not be who the organization believes it is.

This changes how defenders should interpret evidence.

Instead of asking only whether the system is compromised, defenders need to evaluate whether the identity, behavior, collaboration patterns, and working conditions align with a real employee or contractor.

That requires looking across multiple layers:

- Identity usage

- Endpoint interaction

- Collaboration behavior

- Meeting presence

- Access patterns

- Work output

- Time-based consistency

- Device and network context

- Hiring and onboarding records

None of these signals may be decisive alone. Together, they can reveal misalignment.

The Human Presence Problem

Hardware-based access can make system interaction appear local, but it does not fully solve the problem of human presence.

Modern work requires more than typing code, moving files, or responding to messages. Employees are expected to appear in meetings, participate in real-time collaboration, speak consistently, respond naturally, and maintain continuity across many interactions.

That creates friction for this operational model.

For example, many IPKVM implementations do not natively provide a clean way to integrate real-time webcam presence. They can replicate keyboard, video, and mouse interaction, but they do not automatically solve live identity presentation.

This creates a gap between two realities:

- The system looks like it is being operated locally.

- The employee is still expected to appear as a real person in real time.

Historically, operators could rely on avoidance. Camera off. Audio issues. Bandwidth problems. Delayed responses. Limited meeting participation.

That avoidance is becoming less reliable as organizations become more aware of remote worker fraud and identity-based risk.

Tradecraft Is Adapting

DPRK IT worker tradecraft continues to evolve.

Some operators now attempt to close the human presence gap through staged video workflows, remote video relays, virtual camera configurations, and other techniques designed to present a controlled identity during interviews or meetings.

This does not make the operation invisible. It makes it more complex.

Complexity creates failure points.

A staged video setup may introduce inconsistencies in lighting, timing, audio quality, eye movement, camera behavior, device configuration, or collaboration patterns. A remote operator may be able to maintain the appearance of presence in isolated interactions, but maintaining consistency across hiring, onboarding, meetings, productivity tools, endpoint use, and identity systems is much harder.

Recent public reporting and government actions have shown continued concern around North Korean remote IT worker schemes, including the use of facilitators, laptop farms, fraudulent identities, and remote work infrastructure to obtain employment at U.S. companies.

The defensive opportunity is not always found in one obvious indicator. It is found in the accumulated inconsistencies.

Where DPRK IT Worker Activity Shows Up

There is rarely a single indicator that exposes the operation.

Instead, defenders tend to see weak signals that do not make sense in isolation.

Examples include:

- Systems that appear local but do not behave like a single-user environment

- Users who are consistently active but inconsistently present

- Endpoint tooling that does not clearly match the role but is not overtly malicious

- Meeting behavior that differs from work activity

- Identity usage that appears technically valid but operationally inconsistent

- Collaboration patterns that feel shared, staged, or unusually controlled

- Work output that is present but disconnected from normal team behavior

- Repeated friction around live interaction, camera use, voice continuity, or real-time validation

Each of these can have a benign explanation.

That is the challenge.

The goal is not to overreact to one anomaly. The goal is to recognize when the same identity produces too many inconsistencies across too many systems over time.
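The idea of accumulated inconsistencies across systems over time can be sketched as a sliding-window count. This is an illustrative sketch, not a tuned detection: the event tuple shape, the system names, and the thresholds (`window`, `min_systems`, `min_events`) are all assumptions chosen for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_identities(events, window=timedelta(days=30), min_systems=3, min_events=5):
    """Flag identities with many weak anomalies spread across several systems.

    events: iterable of (identity, system, timestamp) tuples, one per weak anomaly.
    Returns the set of identities that, inside any single window, produced at
    least min_events anomalies across at least min_systems distinct systems.
    """
    by_identity = defaultdict(list)
    for identity, system, ts in events:
        by_identity[identity].append((ts, system))

    flagged = set()
    for identity, items in by_identity.items():
        items.sort()
        start = 0
        for end in range(len(items)):
            # Advance the left edge until the span fits inside the window.
            while items[end][0] - items[start][0] > window:
                start += 1
            in_window = items[start:end + 1]
            systems = {system for _, system in in_window}
            if len(in_window) >= min_events and len(systems) >= min_systems:
                flagged.add(identity)
                break
    return flagged


# Hypothetical anomaly events for two identities.
base = datetime(2024, 1, 1)
events = [
    ("worker_a", "meetings", base),
    ("worker_a", "endpoint", base + timedelta(days=2)),
    ("worker_a", "identity", base + timedelta(days=5)),
    ("worker_a", "meetings", base + timedelta(days=9)),
    ("worker_a", "collaboration", base + timedelta(days=12)),
    ("worker_b", "meetings", base),
    ("worker_b", "meetings", base + timedelta(days=40)),
]
print(flag_identities(events))
```

Requiring both a minimum count and a minimum number of distinct systems reflects the point above: one noisy system or one bad week should not be enough on its own.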

Detection Requires a Different Question

Most detection pipelines are designed to answer a familiar question:

“Is something malicious happening?”

That question works for malware, intrusion, exploitation, and many forms of account compromise. It works less well when the activity is performed through approved access by an identity the organization has already accepted.

For DPRK IT worker operations, a better question is:

“Does this identity behave like a real, consistent, accountable person?”

Answering that requires more than endpoint telemetry.

Defenders need to correlate:

- Hiring records

- Interview behavior

- Identity verification outcomes

- Device issuance

- Endpoint activity

- Authentication patterns

- Collaboration data

- Meeting participation

- Manager feedback

- Code or work output

- Access to sensitive systems

- Changes in behavior over time

This is not simple alert triage. It is identity validation under operational conditions.

The strongest findings often come from misalignment between systems that are usually reviewed separately.

For example, endpoint data may look normal. Identity data may look normal. Collaboration activity may look normal. But when reviewed together, they may not describe one coherent person.
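A simple way to picture this kind of cross-system review is to line up the same attribute as each source reports it and look for disagreement. The sketch below assumes each source can be reduced to a single region string per identity; the source names and region codes are hypothetical. VPN egress points, travel, and relocation all produce benign mismatches, so the output is a prompt for review, not a conclusion.

```python
def location_mismatches(records):
    """Return sorted pairs of sources whose reported regions disagree.

    records: mapping of source name -> region string, or None when the source
    has no observation. Missing observations are skipped because an absent
    value is not evidence of a mismatch.
    """
    known = {src: region.strip().lower() for src, region in records.items() if region}
    sources = sorted(known)
    return [
        (a, b)
        for i, a in enumerate(sources)
        for b in sources[i + 1:]
        if known[a] != known[b]
    ]


# Hypothetical view of one identity; each value looks plausible on its own.
profile = {
    "hiring_record": "US-TX",
    "device_shipping": "US-NJ",
    "auth_logs": "US-NJ",
    "meeting_client": None,
}
print(location_mismatches(profile))
```

In this example no single source is alarming, but the hiring record disagrees with both the shipping address and the authentication logs, which is the kind of misalignment that only appears when the sources are read together.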

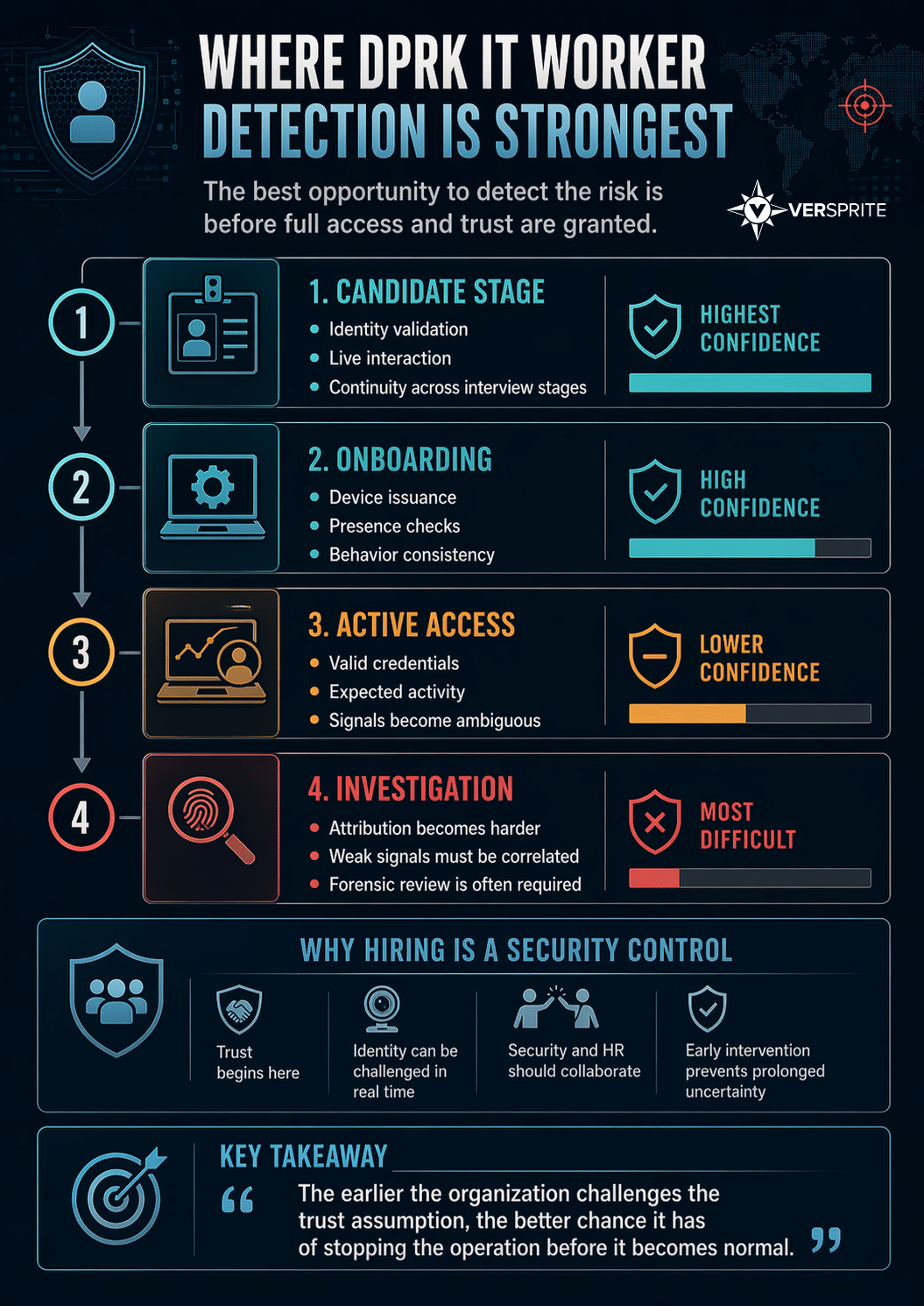

Why the Best Detection Point Is Before Hiring

The most reliable detection point is often before the worker is hired.

That may sound like an HR issue. It is not. For this threat model, hiring is a security control.

Before onboarding, the operator has not yet received full trust. They have not been issued a corporate device. They do not have persistent access. They have not built a history of expected behavior. They have not become part of normal operations.

This is when the model is weakest.

The candidate must demonstrate identity, presence, communication, technical ability, and continuity under real-time conditions. Those requirements are difficult to replicate at scale, especially across multiple interviewers, different interaction formats, and repeated checkpoints.

Once the person is onboarded, the defensive problem becomes harder.

- The credentials are valid.

- The access is expected.

- The endpoint may be clean.

- The work output may appear legitimate.

- The activity may have plausible explanations.

At that stage, the organization is no longer preventing risk. It is trying to resolve ambiguity after trust has already been granted.

Hiring Must Be Treated as a Security Control

Organizations should treat hiring and onboarding as part of the security boundary.

That does not mean turning recruiters into investigators or slowing every hiring process unnecessarily. It means recognizing that remote hiring is now a targeted access path.

A mature hiring security process should include:

- Real-time identity validation beyond static documentation

- Multiple live interaction checkpoints across the hiring process

- Consistency checks between interviews, onboarding, and early work behavior

- Clear escalation paths between HR, security, legal, and hiring managers

- Validation of device shipping addresses, work location claims, and identity artifacts

- Review of unusual candidate patterns, including repeated camera avoidance or inconsistent presence

- Security involvement for roles with access to source code, production systems, customer data, financial systems, or sensitive internal platforms

The objective is not to create friction for legitimate candidates. The objective is to make identity, presence, and continuity harder to fake.

Why Post-Access Detection Breaks Down

After onboarding, the attacker gains several advantages.

- They have valid credentials.

- They may have an approved device.

- They may produce real work.

- They may not need malware.

- They may avoid obvious remote access tools.

- They may operate within expected business workflows.

This creates ambiguity.

A suspicious login can be investigated. A malware alert can be triaged. A blocked exploit can be analyzed. But a valid user doing expected work from an approved device is much harder to classify.

Even strong detection teams can end up chasing weak signals. Those signals are still useful, but they may not be definitive.

As tradecraft improves, post-access indicators become less reliable. Operators can improve video presence, standardize communication patterns, avoid obvious tooling, and align activity more closely with expected work behavior.

That does not mean detection is impossible. It means detection must be based on coherence, not just maliciousness.

Response Matters When Detection Is Ambiguous

When the evidence is unclear, response becomes critical.

The question is not always, “Can we prove this is malicious right now?”

The better operational question is, “Is the behavior consistent with a legitimate user operating this identity?”

If the answer is no, waiting for stronger evidence may allow the activity to normalize. The longer the identity remains trusted, the harder attribution becomes. The worker builds history, gains access, and creates plausible explanations for inconsistencies.

In these cases, organizations need a response process designed for identity ambiguity.

That process should include:

- Preserving endpoint evidence

- Reviewing identity and authentication logs

- Correlating collaboration activity

- Validating meeting and communication patterns

- Reviewing device shipping, setup, and access history

- Limiting access while the investigation is active

- Reconfirming identity under controlled real-time conditions

- Determining whether the activity reflects one consistent operator or shared access

This is different from traditional incident response. The goal is not only to find malware or prove compromise. The goal is to determine whether the identity itself is valid.

Controls That Still Matter

Traditional controls still matter, but their role changes.

- Least privilege is important because it limits what a valid identity can access.

- Detailed logging is important because it allows reconstruction when behavior becomes questionable.

- Endpoint telemetry is important because it can show patterns of use, tooling, and interaction.

- Identity controls are important because they help expose impossible travel, inconsistent authentication behavior, and unusual access paths.

- Collaboration data is important because it shows whether the person behaves consistently across meetings, messages, and work.

- Hiring controls are important because they can stop the operation before trust is granted.

No single control solves the problem. The defense is layered and cross-functional.

The organizations most likely to detect this activity are not necessarily the ones with the most alerts. They are the ones that can connect hiring, identity, endpoint, and collaboration signals into one coherent view.
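The impossible-travel check mentioned under identity controls is a common technique and can be sketched with the haversine great-circle distance. This is a minimal illustration: the login tuple shape and the 900 km/h speed ceiling are assumptions, the coordinates are approximate city locations used only as sample data, and IP geolocation in practice is imprecise enough that results need human review.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(a)))


def impossible_travel(logins, max_kmh=900.0):
    """Return consecutive login pairs whose implied speed exceeds max_kmh.

    logins: list of (timestamp, latitude, longitude) tuples for one identity.
    """
    ordered = sorted(logins)
    hits = []
    for prev, cur in zip(ordered, ordered[1:]):
        hours = (cur[0] - prev[0]).total_seconds() / 3600
        dist = haversine_km(prev[1], prev[2], cur[1], cur[2])
        # Zero distance is never flagged; zero elapsed time with distance always is.
        if dist > 0 and (hours == 0 or dist / hours > max_kmh):
            hits.append((prev, cur))
    return hits


# Illustrative logins: roughly New York, then roughly London one hour later.
logins = [
    (datetime(2024, 3, 4, 9, 0), 40.71, -74.01),
    (datetime(2024, 3, 4, 10, 0), 51.51, -0.13),
    (datetime(2024, 3, 4, 18, 0), 51.51, -0.13),
]
print(len(impossible_travel(logins)))
```

For this threat model the check is most useful as one input among several, since an operator working through hardware located at a fixed address may never trip it.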

Common Mistakes Organizations Make

Many organizations continue to treat DPRK IT worker activity as a narrow hiring issue or a standard insider threat problem.

That leads to several mistakes:

- They assume valid credentials mean valid identity.

- They assume a clean endpoint means a legitimate user.

- They assume work output confirms the employee is real.

- They assume HR owns the hiring risk alone.

- They wait for the SOC to detect something clearly malicious.

- They investigate signals separately instead of correlating identity, endpoint, and collaboration behavior.

These assumptions are exactly what the model exploits.

The operation works because it fits inside normal business processes. It does not need to break every control. It only needs the organization to trust the wrong identity.

Practical Defensive Questions

Organizations can improve their posture by asking direct operational questions:

- Can we validate that this candidate is the same person across every hiring stage?

- Can we confirm real-time presence, not just static identity documents?

- Do interview behavior, onboarding behavior, and early work activity align?

- Are there repeated issues around camera use, audio, presence, or availability?

- Does endpoint activity look like one consistent local user?

- Does collaboration activity match the claimed role, schedule, and work location?

- Are there signs that one identity is being operated by multiple people?

- Can security, HR, and hiring managers escalate concerns quickly?

- Do we have enough logging to reconstruct activity if the user’s legitimacy is questioned?

These questions are not about paranoia. They are about validating trust before and after access is granted.
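One of the questions above, whether a single identity is being operated by multiple people, can be approached by looking at how many distinct clock hours show activity in a day. The sketch below is a rough heuristic under stated assumptions: timestamps are naive datetimes already normalized to UTC, they represent interactive activity rather than scheduled jobs, and the 16-hour threshold is an arbitrary illustrative value. On-call work and automation can produce the same pattern.

```python
from collections import defaultdict
from datetime import datetime


def days_with_wide_activity(timestamps, max_hours_per_day=16):
    """Return dates on which activity spans more distinct hours than one person plausibly works.

    timestamps: iterable of datetimes for one identity, assumed to be in UTC.
    """
    hours_by_day = defaultdict(set)
    for ts in timestamps:
        hours_by_day[ts.date()].add(ts.hour)
    return sorted(
        day for day, hours in hours_by_day.items() if len(hours) > max_hours_per_day
    )


# Hypothetical activity: 20 active hours on one day, 9 on the next.
activity = [datetime(2024, 3, 4, h, 15) for h in range(20)]
activity += [datetime(2024, 3, 5, h, 30) for h in range(9, 18)]
print(days_with_wide_activity(activity))
```

A single wide day means little. Repeated wide days for the same identity, combined with the other questions in this list, is where the heuristic becomes worth escalating.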

Final Assessment

DPRK IT worker operations do not primarily exploit a technical vulnerability.

They exploit a trust model.

They take advantage of the assumptions that organizations make when someone passes an interview, receives credentials, uses an approved device, and produces expected work.

As long as organizations assume that a hired worker is legitimate, that valid credentials equal valid identity, and that system activity reflects a real person, this model will continue to work.

The defensive shift is not only about better alerts.

It is about validating whether the entity operating inside the environment is consistent, coherent, and real.

That validation should begin before access is granted. It should continue through onboarding. It should be reinforced by identity, endpoint, collaboration, and response processes that are designed to detect misalignment over time.

The earlier the organization challenges the trust assumption, the better chance it has of stopping the operation before it becomes normal.

FAQ

What are DPRK IT workers?

DPRK IT workers are North Korean information technology workers who seek remote employment while concealing their true identity, nationality, or location. Public advisories from U.S. government agencies warn that these workers may pose as non-DPRK nationals to obtain jobs and generate revenue for the North Korean regime.

Why are DPRK IT worker operations difficult to detect?

They are difficult to detect because the activity often begins with legitimate hiring. The worker may use valid credentials, approved devices, and normal business workflows. Traditional security tools may not see malware, exploitation, or unauthorized access because the organization has already granted trust.

Are DPRK IT workers the same as insider threats?

They can create insider-like risk, but the model is different. A traditional insider is usually a legitimate employee who becomes malicious or negligent. In this model, the problem may be that the identity was never valid in the first place.

Why does hiring matter in cybersecurity?

Hiring matters because it is the point where organizational trust begins. If a threat actor can pass hiring and onboarding, they may receive credentials, devices, access, and internal legitimacy. For DPRK IT worker risk, hiring and onboarding are security controls.

What is an IPKVM and why does it matter?

An IPKVM is a hardware-based tool that allows remote keyboard, video, and mouse control of a system. In this threat model, it matters because activity may appear local to the endpoint, reducing the usefulness of detections focused only on software-based remote access tools.

What should organizations look for?

Organizations should look for misalignment across identity, endpoint, collaboration, hiring, and behavioral signals. The issue is rarely one clear indicator. It is usually a pattern of inconsistencies that suggests the identity may not represent one real, consistent person.
